Return on Intelligence: How to Measure What Your AI Agents Actually Produce

AI Architecture · March 23, 2026 · 12 min read

A practical framework for measuring AI agent productivity — tokens per insight, intelligence per hour, and return on context. Built from a real overnight multi-agent session.

After running 13 specialized AI agents overnight — auditing security, accessibility, brand voice, SEO, and design across 50+ production files — I realized we have been measuring AI productivity completely wrong. Here is the framework I built from the results.

TL;DR

After running 13 AI agents overnight across security, accessibility, SEO, and design audits, I built a framework for measuring what actually matters: not tokens consumed, but intelligence delivered per unit of context. The session produced 261 insertions across 17 files, found 4 P0 security vulnerabilities, remediated 7 accessibility failures, corrected 5 brand voice violations, and resolved 14 consecutive failed deployments. Total cost: roughly $15. Equivalent human services: an estimated $18,500 to $88,000. The framework that emerged, Return on Intelligence (ROI-squared), gives you five levels to measure what your AI agents actually produce.

The Problem: We Measure AI Wrong

Open any AI analytics dashboard. You will see tokens consumed, average response time, cost per query, model utilization rates. These are the metrics the industry has settled on.

They measure the engine. They tell you nothing about the road trip.

The real questions nobody tracks:

- Insights per session: How many actionable findings did the agent surface?

- Decisions enabled: How many human decisions were accelerated or improved?

- Bugs prevented: What would have shipped broken without this review?

- Quality delta: What was the measurable improvement in the output?

This is the gap between "AI usage" and "AI intelligence." Usage is a volume metric. Intelligence is an outcome metric. Every team I work with at Oracle's EMEA AI Center of Excellence tracks the first. Almost none track the second.

So I ran an experiment.

The Overnight Experiment

On March 22, 2026, I deployed 13 specialized AI agents against my production website — frankx.ai — in a single overnight session. The goal was straightforward: audit everything, fix what matters, measure what the agents actually produced.

The Agent Roster

5 Review Agents (parallel execution):

1. Security Auditor: scanned API routes, authentication flows, environment variable exposure
2. Accessibility Analyst: WCAG 2.2 compliance across all interactive components
3. SEO Intelligence: meta tags, schema markup, internal linking, Core Web Vitals impact
4. Brand Voice Reviewer: copy analysis against established brand guidelines
5. Frontend Quality: component architecture, performance patterns, design consistency

3 Fix Agents (sequential, informed by reviews):

6. Security Hardener: patched vulnerabilities found by the security auditor
7. Accessibility Remediator: fixed ARIA labels, focus management, color contrast
8. Brand Voice Editor: rewrote copy that violated positive-framing guidelines

5 Build Agents (parallel execution):

9. Deployment Engineer: diagnosed and resolved build failures
10. Component Optimizer: refactored flagged components
11. Schema Markup Generator: added structured data where missing
12. Image Optimizer: compressed and converted assets
13. Quality Scorecard: produced the final quality assessment
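The roster splits into a concurrent review phase and a sequential fix phase. A minimal Python sketch of that shape, assuming a hypothetical `run_agent` coroutine that stands in for a real model call; the agent names and the reviewer-to-fixer mapping are illustrative, and the build phase is left out:

```python
import asyncio

# Hypothetical stand-in for a real agent call (model API + domain prompt + context).
# Here it simply echoes back whatever findings the context holds for this agent.
async def run_agent(name: str, context: dict[str, list[str]]) -> dict:
    await asyncio.sleep(0)  # yield to the event loop, as a network call would
    return {"agent": name, "findings": list(context.get(name, []))}

REVIEWERS = ["security", "accessibility", "seo", "brand_voice", "frontend"]

# Each fix agent consumes the output of one reviewer (illustrative mapping).
FIXERS = {
    "security_hardener": "security",
    "accessibility_remediator": "accessibility",
    "brand_voice_editor": "brand_voice",
}

async def run_session(context: dict[str, list[str]]) -> tuple[list[dict], list[dict]]:
    # Review phase: scopes are independent, so all reviewers run concurrently.
    reviews = await asyncio.gather(*(run_agent(r, context) for r in REVIEWERS))
    by_agent = {review["agent"]: review for review in reviews}

    # Fix phase: awaited one at a time, each fixer reading its reviewer's findings.
    fixes = []
    for fixer, source in FIXERS.items():
        fix_context = {fixer: by_agent[source]["findings"]}
        fixes.append(await run_agent(fixer, fix_context))
    return list(reviews), fixes

if __name__ == "__main__":
    ctx = {"security": ["env var exposed in client route"], "brand_voice": ["negative framing"]}
    reviews, fixes = asyncio.run(run_session(ctx))
    for fix in fixes:
        print(fix["agent"], fix["findings"])
```

`asyncio.gather` returns results in the order the reviewers were listed, so the fix phase can look them up by name regardless of which reviewer finished first.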

The Raw Numbers

| Metric | Value |
|---|---|
| Production files analyzed | 50+ |
| Files modified | 17 |
| Total insertions | 261 |
| P0 security vulnerabilities found and fixed | 4 |
| Accessibility failures remediated | 7 |
| Brand voice violations corrected | 5 |
| Failed deployments diagnosed and resolved | 14 |
| Quality score (before) | Unmeasured |
| Quality score (after) | 6.8/10 with clear improvement path |
| Wall-clock time | ~4 hours |
| Estimated cost | ~$15 |

The 14 consecutive failed deployments deserve their own note. Each failure had a different root cause: missing imports, type mismatches, environment variable references, build-order dependencies. A human developer would likely have spent a full day on the diagnosis-fix-deploy cycle. The deployment agent resolved all 14 in sequence, learning from each failure to anticipate the next.

The Framework: Return on Intelligence (ROI-squared)

From this session, a measurement framework emerged. I call it ROI-squared — Return on Intelligence — because it compounds in ways traditional ROI calculations miss.

Five levels, from basic economics to compound intelligence.

Level 1: Token Economics

The foundation. What did you spend, and what did the market alternative cost?

| Agent | Estimated Token Cost | Human Equivalent |
|---|---|---|
| Security Audit | ~$1.50 | $5,000 - $50,000 (penetration test) |
| Accessibility Audit | ~$1.00 | $3,000 - $10,000 (WCAG audit) |
| SEO Review | ~$1.00 | $2,000 - $5,000 (technical SEO audit) |
| Brand Voice Review | ~$0.75 | $1,500 - $3,000 (brand consultant) |
| Frontend Quality | ~$1.50 | $2,000 - $5,000 (code review) |
| Fix + Build Agents (8) | ~$9.25 | $5,000 - $15,000 (dev time) |
| Total | ~$15 | $18,500 - $88,000 |
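The totals row can be reproduced from the individual rows. A short Python check using the table's own figures; the cost multiple printed at the end is derived here for illustration, not stated in the table:

```python
# Level 1 figures: (estimated token cost, human low, human high), all in USD.
LEVEL1 = {
    "Security Audit": (1.50, 5_000, 50_000),
    "Accessibility Audit": (1.00, 3_000, 10_000),
    "SEO Review": (1.00, 2_000, 5_000),
    "Brand Voice Review": (0.75, 1_500, 3_000),
    "Frontend Quality": (1.50, 2_000, 5_000),
    "Fix + Build Agents (8)": (9.25, 5_000, 15_000),
}

def totals(rows: dict[str, tuple[float, int, int]]) -> tuple[float, int, int]:
    """Sum each column of the table."""
    token = sum(r[0] for r in rows.values())
    low = sum(r[1] for r in rows.values())
    high = sum(r[2] for r in rows.values())
    return token, low, high

token, low, high = totals(LEVEL1)
print(f"Token cost ${token:.2f}; human ${low:,}-${high:,}; "
      f"about {low / token:,.0f}x-{high / token:,.0f}x")
# Token cost $15.00; human $18,500-$88,000; about 1,233x-5,867x
```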

Token economics alone tell a compelling story. But they are the least interesting level of the framework. Cost savings are table stakes. The real value is in what comes next.

Level 2: Intelligence Density

How much signal did each agent produce per unit of output?

The frontend quality agent generated approximately 92,000 tokens of output. Within that output: 6 critical findings, 9 important findings, and 12 minor observations. Counting only the critical and important findings as actionable, that is 15 actionable findings in 92K tokens, or one genuine insight per 6,133 tokens of output.

Compare that to a typical ChatGPT conversation where you might get one actionable insight per 15,000-20,000 tokens of back-and-forth.

Intelligence Density = (Actionable Findings / Total Output Tokens) x 1000, i.e. actionable findings per 1K output tokens (about 0.16 for the frontend agent)

The security agent had the lowest raw density of the three: 4 P0 findings in roughly 45K tokens of output, or one critical finding per 11,250 tokens (about 0.09 per 1K). But it had the highest severity: every single finding was P0 and required immediate action, with zero noise.

The brand voice agent had the highest raw density: 5 findings in ~30K tokens, or one per 6,000 tokens (about 0.17 per 1K), just ahead of the frontend agent. Its precision was equally high: every flagged violation was a genuine breach of the established brand guidelines, with specific rewrites provided.
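Applying the density formula to the three agents' reported figures takes a few lines of Python. This sketch uses the approximate token counts given above and ranks the agents by raw density; it deliberately ignores severity, which the formula does not capture:

```python
def intelligence_density(actionable: int, output_tokens: int) -> float:
    """Actionable findings per 1,000 output tokens."""
    return actionable / output_tokens * 1000

# Approximate figures from the overnight session: (actionable findings, output tokens).
AGENTS = {
    "frontend": (15, 92_000),    # 6 critical + 9 important; 12 minor excluded
    "security": (4, 45_000),     # all P0
    "brand_voice": (5, 30_000),
}

ranked = sorted(AGENTS, key=lambda name: intelligence_density(*AGENTS[name]), reverse=True)
for name in ranked:
    found, tokens = AGENTS[name]
    print(f"{name}: {intelligence_density(found, tokens):.3f} per 1K tokens "
          f"(one per {tokens / found:,.0f} tokens)")
```

The ranking comes out brand voice, then frontend, then security, even though the security agent's four findings were the most severe. That gap is why density is one level of the framework rather than the whole of it.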

The metric that matters is not how much the agent says. It is how much of what it says changes your next action.

Level 3: Decision Velocity

Time from question to actionable answer.

Sequential execution: 5 review agents running one after another would take approximately 5 hours of wall-clock time, plus human review time between each.

Parallel execution: 5 review agents running simultaneously completed in 47 minutes. The fix agents then ran sequentially (they needed the review outputs) in another 90 minutes. Total: under 3 hours from "start audit" to "all fixes applied."

Parallel Efficiency Ratio = Sequential Time / Parallel Time

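In this session: 5 hours (estimated) / 0.78 hours (47 minutes, measured) = about 6.4x parallel efficiency for the review phase alone. Treat that ratio as approximate: the sequential figure is an estimate, and with five independent agents a fully measured comparison cannot exceed 5x, because a parallel run can finish no sooner than its slowest agent.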
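Because the sequential figure is an estimate, it helps to compute the ratio from logged per-agent durations instead. A minimal sketch, assuming you record each agent's run time (the durations below are hypothetical): summed durations give the sequential time, and the check enforces that a concurrent run cannot beat its slowest agent, which keeps the ratio at or below the number of agents.

```python
def parallel_efficiency(sequential_hours: float, parallel_hours: float) -> float:
    """Parallel Efficiency Ratio = Sequential Time / Parallel Time."""
    return sequential_hours / parallel_hours

def measured_ratio(agent_hours: list[float], parallel_wall_clock: float) -> float:
    """Ratio from measured per-agent durations instead of an estimated sequential run."""
    sequential = sum(agent_hours)  # one after another, durations add up
    # Run concurrently, the phase can finish no sooner than its slowest agent.
    if parallel_wall_clock < max(agent_hours):
        raise ValueError("wall-clock time is shorter than the slowest agent")
    return parallel_efficiency(sequential, parallel_wall_clock)

# The session's own figures: ~5 hours estimated sequentially vs 47 minutes in parallel.
print(round(parallel_efficiency(5.0, 47 / 60), 1))  # 6.4

# Hypothetical measured durations (hours) for five review agents run concurrently.
durations = [0.70, 0.78, 0.55, 0.40, 0.62]
ratio = measured_ratio(durations, parallel_wall_clock=0.78)
print(round(ratio, 2), "<=", len(durations))
```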

But velocity without accuracy is just fast mistakes. The validation question: were the findings correct?

Of the 4 P0 security findings, all 4 were verified as genuine vulnerabilities. Of the 7 accessibility failures, all 7 violated specific WCAG 2.2 success criteria. Of the 5 brand voice violations, all 5 contradicted documented brand guidelines.

Validation rate: 100% for this session. That number will not always be perfect — but tracking it over time reveals which agents produce reliable findings and which need calibration.

Level 4: Compound Intelligence

Does each session make the next one smarter?

This is where the framework moves beyond single-session measurement. In the ACOS (Autonomous Claude Operating System) architecture, every agent session contributes to a trajectory learning system. The security agent's findings from this session become context for the next security review. Patterns emerge: "This codebase tends to expose API keys in client-side route handlers" becomes a learned heuristic.

Three metrics for compound intelligence:

- Pattern Recognition Rate: How many findings in session N were anticipated from session N-1 patterns? (Target: increasing over time)

- False Positive Decay: Do repeated sessions produce fewer irrelevant findings? (Target: decreasing over time)

- Time to First Insight: Does the agent reach its first actionable finding faster each session? (Target: decreasing over time)
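All three metrics are trends, so they need a per-session log and a way to read its direction. A sketch, assuming a hypothetical per-session record with the fields below and using a least-squares slope as the trend signal (one reasonable choice among several):

```python
from dataclasses import dataclass

@dataclass
class Session:
    findings: int                    # actionable findings this session
    anticipated: int                 # of those, how many prior-session patterns predicted
    false_positives: int             # findings rejected during validation
    minutes_to_first_insight: float

def pattern_recognition_rate(s: Session) -> float:
    return s.anticipated / s.findings if s.findings else 0.0

def trend(values: list[float]) -> float:
    """Least-squares slope per session: positive means rising, negative means falling."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two sessions to estimate a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den

# Hypothetical history for one agent, oldest session first.
history = [Session(10, 1, 3, 30.0), Session(12, 3, 2, 22.0), Session(11, 5, 1, 15.0)]
print("Pattern recognition rising:", trend([pattern_recognition_rate(s) for s in history]) > 0)
print("False positives falling:", trend([s.false_positives for s in history]) < 0)
print("Time to first insight falling:", trend([s.minutes_to_first_insight for s in history]) < 0)
```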

After 6+ months of running specialized agents against this codebase, the compound effect is measurable. The brand voice agent now catches violations that would have slipped past it 3 months ago — because it has accumulated context about how the brand has evolved. The security agent prioritizes the attack surfaces that this specific architecture exposes, rather than running generic checklists.

Level 5: Return on Context

The most valuable — and hardest to measure — level.

Every agent session consumes context: project files, previous findings, brand guidelines, architecture decisions, deployment history. That context has a cost (tokens) and a value (better decisions).

Return on Context = Decision Quality Improvement / Context Tokens Consumed

The overnight session loaded approximately 200K tokens of context across all agents: CLAUDE.md files, previous audit results, brand guidelines, component inventories, deployment logs. That context investment produced findings that a zero-context agent would have missed entirely.

Example: The brand voice agent flagged copy that used the phrase "This is NOT for beginners." A generic AI reviewer would see nothing wrong. But with the brand context loaded — specifically, the rule that FrankX properties use positive-only framing — the agent correctly identified this as a violation and suggested "Designed for builders at every stage" as the replacement.

That finding was only possible because of accumulated context. The context is a moat — a compound advantage that gets deeper with every session.

Context compounds like interest. The first session is the most expensive. Every session after that gets cheaper per insight, because the work of building the context is already done; later sessions pay only to load it.
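"Decision Quality Improvement" has no direct unit, so one practical proxy is the count of findings that needed the loaded context (the same quantity the scorecard below calls Context Leverage). A sketch under that assumption, with hypothetical dollar figures, that also shows the amortization effect: a one-time build cost spread over a growing number of insights.

```python
def return_on_context(context_dependent_findings: int, context_tokens: int) -> float:
    """Proxy for Return on Context: context-dependent findings per 100K context tokens."""
    return context_dependent_findings * 100_000 / context_tokens

def cumulative_cost_per_insight(build_cost: float, load_cost: float,
                                insights_per_session: list[int]) -> list[float]:
    """Total spend so far divided by total insights so far, after each session.

    build_cost: one-time effort to create the context, paid up front.
    load_cost: tokens spent loading that context into each session.
    """
    spent, found, curve = build_cost, 0, []
    for insights in insights_per_session:
        spent += load_cost
        found += insights
        curve.append(spent / found if found else float("inf"))
    return curve

# 200K context tokens as in the overnight session; the finding count of 4 is hypothetical.
print(return_on_context(4, 200_000))  # 2.0
# Hypothetical costs: $20 to build the context, $3 to load it per session.
print([round(c, 2) for c in cumulative_cost_per_insight(20.0, 3.0, [5, 5, 5, 5])])
# [4.6, 2.6, 1.93, 1.6]
```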

Measuring Your Own AI Intelligence

The Scorecard

You do not need 13 agents to start measuring. Here is a scorecard that works for any AI-assisted workflow:

| Metric | How to Measure | Beginner Target | Advanced Target |
|---|---|---|---|
| Insights/Session | Count actionable findings per agent run | 5+ per session | 15+ per session |
| Fix Rate | Share of findings fixed in the same session | >60% | >80% |
| Deploy Success | Share of builds that succeed after agent-assisted changes | >80% | >95% |
| Quality Delta | Measurable score improvement per review cycle | +0.5 points | +1.5 points |
| Parallel Efficiency | Sequential time / parallel wall-clock time | 2x | 5x+ |
| Validation Rate | Share of findings confirmed as genuine | >70% | >90% |
| Context Leverage | Insights only possible with accumulated context | 1+ per session | 5+ per session |

Track these over 10 sessions. The trends tell you more than any single number.
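The targets translate directly into a small grading helper. A sketch, assuming you log one value per metric per session (the metric keys are hypothetical). It treats every threshold as inclusive, a simplification of the table's mix of "5+" and ">60%" notations, and grades the average across sessions so a single outlier run does not dominate:

```python
from statistics import mean

# (beginner, advanced) thresholds from the scorecard; higher is better for all of them.
TARGETS = {
    "insights_per_session": (5, 15),
    "fix_rate": (0.60, 0.80),
    "deploy_success": (0.80, 0.95),
    "quality_delta": (0.5, 1.5),
    "parallel_efficiency": (2.0, 5.0),
    "validation_rate": (0.70, 0.90),
    "context_leverage": (1, 5),
}

def grade(metric: str, value: float) -> str:
    beginner, advanced = TARGETS[metric]
    if value >= advanced:
        return "advanced"
    if value >= beginner:
        return "beginner"
    return "below target"

def scorecard(sessions: list[dict[str, float]]) -> dict[str, str]:
    """Grade each metric on its average over the sessions that logged it."""
    report = {}
    for metric in TARGETS:
        values = [s[metric] for s in sessions if metric in s]
        if values:
            report[metric] = grade(metric, mean(values))
    return report

# Hypothetical log of two sessions.
log = [
    {"insights_per_session": 12, "fix_rate": 0.5, "validation_rate": 1.0},
    {"insights_per_session": 18, "fix_rate": 1.0, "validation_rate": 1.0},
]
print(scorecard(log))
# {'insights_per_session': 'advanced', 'fix_rate': 'beginner', 'validation_rate': 'advanced'}
```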

The Intelligence Stack

Model selection determines the ceiling of your intelligence output. From this session:

- Opus-class models for architecture decisions, security analysis, and complex reasoning. These are your senior engineers — expensive per token, but their findings are worth 10x a cheaper model's output.

- Sonnet-class models for bulk fixes, code generation, and implementation. The workhorses — fast, reliable, cost-effective for well-defined tasks.

- Haiku-class models for validation, formatting checks, and simple transformations. Quality gates that cost almost nothing to run.
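The three tiers amount to a routing table from task type to model class. A sketch with hypothetical task labels and placeholder tier names; they are not real model identifiers, so substitute whatever models your platform exposes:

```python
from enum import Enum

class Tier(Enum):
    # Placeholder tier names, not real model identifiers.
    OPUS_CLASS = "opus-class"      # senior engineers: architecture, security, complex reasoning
    SONNET_CLASS = "sonnet-class"  # workhorses: bulk fixes, code generation, implementation
    HAIKU_CLASS = "haiku-class"    # quality gates: validation, formatting, simple transforms

# Hypothetical task labels mapped to the tiers described above.
ROUTES = {
    "architecture": Tier.OPUS_CLASS,
    "security_analysis": Tier.OPUS_CLASS,
    "complex_reasoning": Tier.OPUS_CLASS,
    "bulk_fix": Tier.SONNET_CLASS,
    "code_generation": Tier.SONNET_CLASS,
    "implementation": Tier.SONNET_CLASS,
    "validation": Tier.HAIKU_CLASS,
    "formatting_check": Tier.HAIKU_CLASS,
    "simple_transform": Tier.HAIKU_CLASS,
}

def route(task_type: str) -> Tier:
    # Unknown task types fall back to the workhorse tier (an assumption, not a rule from the session).
    return ROUTES.get(task_type, Tier.SONNET_CLASS)

print(route("security_analysis").value)  # opus-class
```

Defaulting to the middle tier is one choice; a stricter setup might raise on unknown task types so that every new kind of work gets an explicit routing decision.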

Agent specialization consistently outperforms generalist prompts. A security-focused agent with security-specific context finds vulnerabilities that a general-purpose "review my code" prompt misses entirely. The overnight session proved this across all 5 review domains.

Parallel execution consistently outperforms sequential. When agents have independent scopes (security does not need to wait for accessibility), running them simultaneously cuts wall-clock time by 3-6x with identical quality.

Persistent memory consistently outperforms stateless sessions. An agent that remembers last month's findings catches regressions. An agent starting from zero catches only what is obvious today.

What Genuine AI Intelligence Actually Requires

The overnight session revealed the gap between "agents that execute" and "agents that think." Here is what separates competent AI assistance from genuine intelligence:

Proactive issue detection. The security agent was not asked "is there a vulnerability in this specific file." It scanned the entire API surface and flagged exposures the human operator had not considered. Intelligence means finding the questions, not just answering them.

Cross-domain synthesis. The accessibility agent's findings informed the frontend quality agent's recommendations. A button with poor contrast (accessibility finding) was also a brand violation (brand guidelines specify minimum contrast ratios). An intelligent system connects these — a collection of independent agents does not.

Self-improving workflows. Each session's findings feed into the next session's context. The deployment agent that resolved 14 build failures now has a mental model of this project's build pipeline that will make the 15th failure faster to diagnose.

Real-time deployment verification. Intelligence is not "I wrote the fix." Intelligence is "I wrote the fix, verified it builds, confirmed the deployment succeeded, and validated the production behavior." The full loop, every time.

Taste and judgment. The brand voice agent did not just check rules. It evaluated whether replacement copy maintained the same energy and specificity as the original while conforming to guidelines. That requires judgment — an understanding of what "good" sounds like for this specific brand.

The Honest Assessment

Here is what AI agents still cannot do, measured by this same framework:

Design excellence remains human. The agents can flag accessibility failures, identify inconsistent spacing, catch broken layouts. They cannot look at a page and feel that something is off about the visual hierarchy. The "last mile" of design quality — the difference between a 7/10 and a 9/10 — requires human vision.

Strategic prioritization requires context agents lack. The agents found and fixed everything they were pointed at. They did not ask "should we be working on this at all?" Deciding where to aim the agents is still a human judgment call.

Taste scales slowly. You can teach an agent rules immediately. You cannot teach it taste in a single session. Taste accumulates through hundreds of sessions, thousands of examples, and continuous calibration against human judgment.

The quality score of 6.8/10 is honest. The agents took the project from no measured baseline to a scored 6.8, with a clear path to 8.5+. But those last 1.7 points will take more human-AI collaboration: more calibration, more examples of excellence, more refinement of what "great" means for this specific project.

Measuring intelligence is easier than having intelligence. This framework tells you how productive your agents are. It does not make them smarter. That requires better models, better context, better specialization, and — most importantly — a human who knows what excellent output looks like.

Getting Started Tomorrow

You do not need 13 agents, an orchestration framework, or months of accumulated context. Start here:

- Pick one domain. Security, accessibility, content quality, performance — whatever matters most to your project right now.

- Run a specialized agent with domain-specific context loaded. Give it your project's actual files, guidelines, and standards.

- Count the actionable findings. Write them down. Be honest about which ones are genuine insights versus noise.

- Fix what matters in the same session. Track your fix rate.

- Save the context. The findings, the fixes, the patterns — store them where the next session can access them.

- Run it again next week. Compare the numbers. Are you finding fewer issues (improvement) or different issues (expanded coverage)?
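The last two steps (saving context and comparing runs) can start as a plain JSON file. A minimal sketch, assuming a hypothetical `sessions.json` log with one record per run; the field names are illustrative, and a real setup might store this wherever your agents already load context from:

```python
import json
from datetime import date
from pathlib import Path

def save_session(path: Path, domain: str, findings: list[str], fixed: list[str]) -> None:
    """Append one session record so the next run can load it as context."""
    history = json.loads(path.read_text()) if path.exists() else []
    history.append({
        "date": date.today().isoformat(),
        "domain": domain,
        "findings": findings,
        "fixed": fixed,
    })
    path.write_text(json.dumps(history, indent=2))

def compare_last_two(path: Path) -> dict:
    """Contrast the latest session with the one before it."""
    history = json.loads(path.read_text())
    if len(history) < 2:
        raise ValueError("need at least two saved sessions to compare")
    prev, last = history[-2], history[-1]
    before, now = set(prev["findings"]), set(last["findings"])
    fix_rate = len(last["fixed"]) / len(last["findings"]) if last["findings"] else 0.0
    return {
        "new": sorted(now - before),        # different issues: expanded coverage
        "recurring": sorted(now & before),  # still present since the last run
        "gone": sorted(before - now),       # no longer reported
        "fix_rate": fix_rate,
    }
```

Fewer entries under "recurring" and more under "gone" point to improvement; a steady stream of "new" entries points to expanded coverage.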

After 4-6 sessions, you will have enough data to calculate your own Return on Intelligence. The numbers will surprise you — not because AI is magic, but because measuring outcomes instead of inputs changes how you deploy intelligence entirely.

The ROI-squared framework works because it measures what actually changed, not what was consumed. Tokens are the cost. Intelligence is the return.

Start measuring the return.

Frequently Asked Questions

What is Return on Intelligence?

Return on Intelligence (ROI-squared) is a five-level framework for measuring AI agent productivity by outcomes rather than inputs. Instead of stopping at tokens consumed or cost per query, it starts from token economics (cost versus the human alternative) and then measures intelligence density, decision velocity, compound learning effects, and the return generated by accumulated context. The framework was built from a real overnight session running 13 specialized AI agents across security, accessibility, SEO, brand, and frontend quality audits.

How do you measure AI agent productivity?

AI agent productivity is best measured across five dimensions: token economics (cost versus human equivalent), intelligence density (actionable findings per thousand tokens of output), decision velocity (time from question to verified answer), compound intelligence (whether each session improves the next), and return on context (how accumulated project knowledge improves finding quality). Track these metrics over 10+ sessions to identify meaningful trends rather than relying on single-session snapshots.

How many agents should you run in parallel?

The optimal number depends on how many independent review domains you have. If agents have non-overlapping scopes (for example, security and accessibility analyze different aspects of the same codebase), they run effectively in parallel with near-linear time savings. In the overnight session, 5 review agents ran simultaneously, with an estimated 6.4x parallel efficiency ratio versus sequential execution. The practical ceiling is determined by your orchestration infrastructure and context window limits, not by diminishing returns from parallelism itself.

What is the cost of a multi-agent review session?

A comprehensive 13-agent session covering security, accessibility, SEO, brand voice, and frontend quality costs approximately $15 in API tokens using current Opus and Sonnet-class models. The equivalent human professional services — penetration testing, WCAG auditing, technical SEO review, brand consulting, and code review — would range from $18,500 to $88,000. The cost-per-insight ratio makes multi-agent reviews practical to run weekly or even daily, compared to quarterly or annual cadences for human-led audits.

How does AI agent intelligence compound over time?

AI agent intelligence compounds through three mechanisms: persistent memory systems that retain findings across sessions, trajectory learning that identifies recurring patterns in a specific codebase, and context accumulation that enables findings impossible without historical knowledge. In practice, this means a security agent that has reviewed your codebase 20 times catches subtle regressions that a first-time reviewer would miss. The compound effect is measurable through decreasing false positive rates, faster time-to-first-insight, and increasing context-dependent findings over successive sessions.

Get Started

Build your first AI system

Step-by-step guide to setting up ACOS, creating your first agent, and shipping real products with AI.

Start buildingTemplates & Blueprints

Production-ready architecture

Download AI architecture templates, multi-agent blueprints, and prompt engineering patterns.

Browse templatesInner Circle

Join the builder community

Connect with creators and architects shipping AI products. Weekly office hours, shared resources, direct access.

Join the circleRead on FrankX.AI — AI Architecture, Music & Creator Intelligence

Stay in the intelligence loop

Weekly field notes on AI systems, production patterns, and builder strategy.

Continue Reading

Creator Systems8 min read

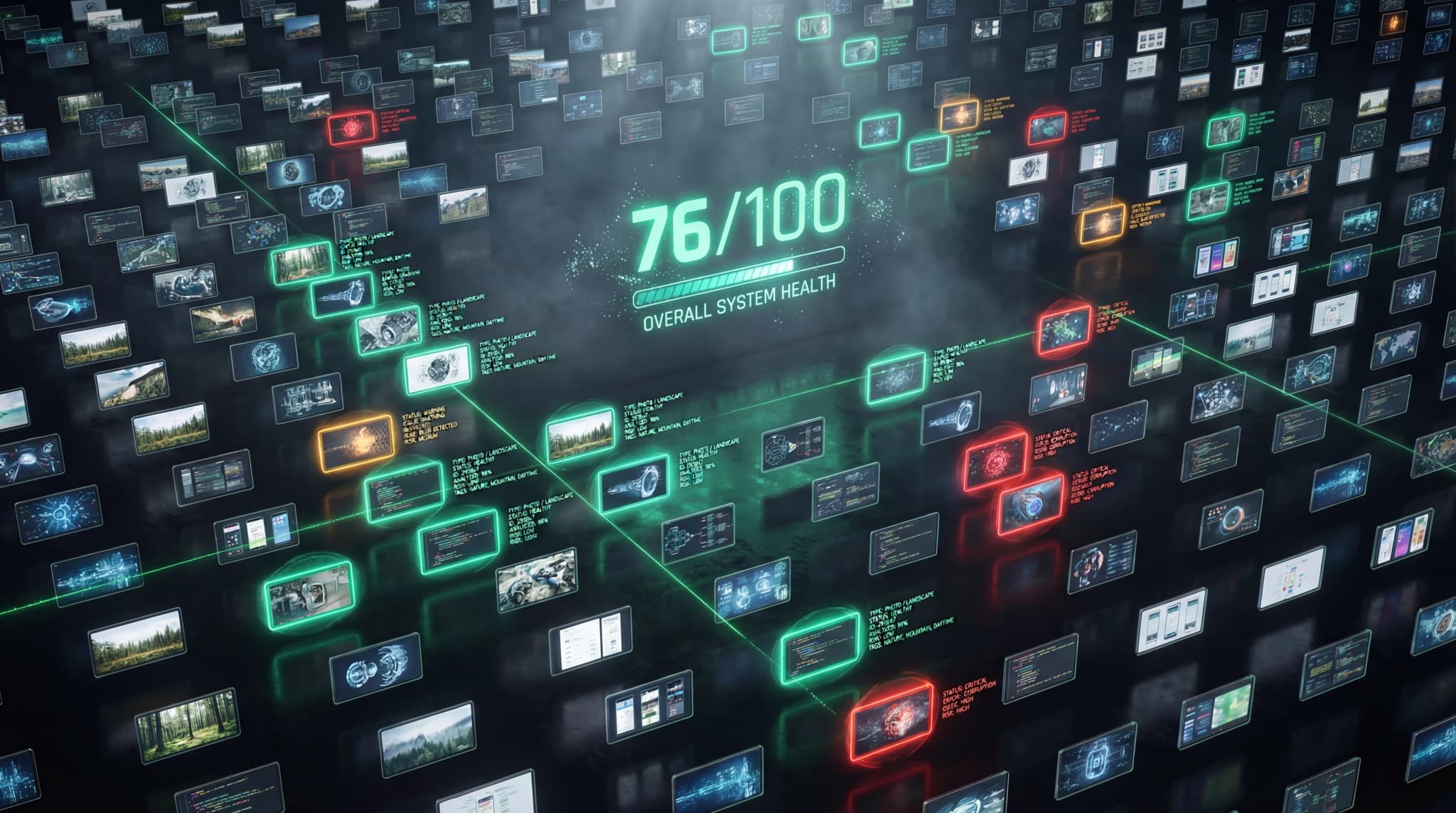

How I Built a Visual Intelligence System After Losing Track of 408 Images

I discovered 82% of my site's images were orphaned, flagship posts used placeholder SVGs, and 3 blog posts showed the same hero twice. Here's the system I built to fix it — and how you can use it too.

Read article

Creator Tools6 min read

The Best AI Tools for Creators in 2026: A GenCreator's Production Stack

The complete AI creator toolkit — from Claude Code for development to Suno for music. Every tool battle-tested in daily production use.

Read article

Creator Systems6 min read

The 3-Tier Shipping System: How GenCreators Build Creative Momentum

Full Ship, Quick Ship, Micro Ship — energy-aware daily output. The system that turns sporadic creation into unstoppable momentum.

Read article