Reality Architecture: Imagination to Product, Faster with AI

Intelligence Dispatches | March 15, 2026 | 11 min read

Neuroscience of imagination meets generative AI. The 5-phase framework for turning mental models into shipped products — at machine speed.

🎯 Reading Goal

You will understand how neuroscience of imagination, deliberate creation practices, and generative AI tools converge into a new discipline for making ideas real faster.

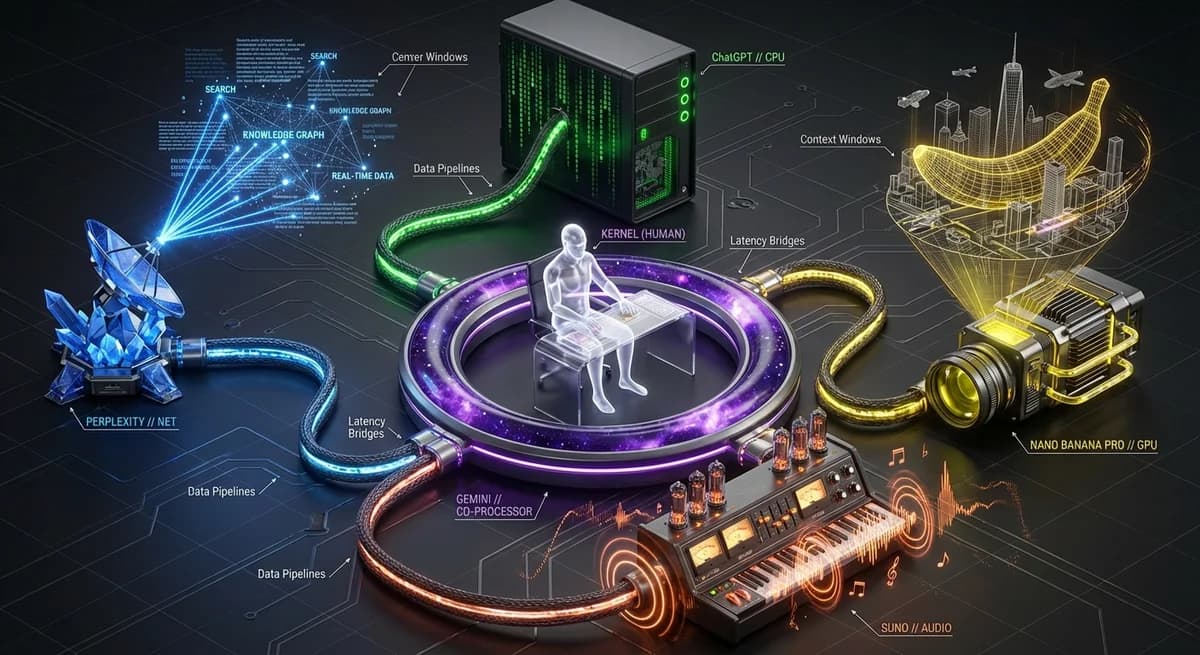

TL;DR: Reality Architecture is the discipline of deliberately designing and building your future using three converging forces — the neuroscience of imagination (your brain treats vivid mental rehearsal as real experience), deliberate creation practices (visualization, structured planning, and mind-mapping measurably increase achievement), and generative AI (which collapses the time between idea and tangible artifact from months to minutes). One person, armed with this framework, can now produce what entire studios required a decade ago. The limiting factor has shifted. It is no longer capability. It is clarity of vision.

The Convergence: Three Fields That Were Separate Are Now One

For most of human history, imagination and reality lived in separate worlds.

You had an idea. Then you spent months — sometimes years — recruiting collaborators, raising capital, acquiring skills, building prototypes, failing, iterating, and eventually arriving at something that resembled your original vision. The gap between "I can see this clearly in my mind" and "this exists in the world" was measured in years and dollars.

Three disciplines developed in parallel to close that gap. Each made progress. None succeeded alone.

Neuroscience of imagination discovered that the brain does not sharply distinguish between vividly imagined experience and lived experience. The same neural circuits fire. The same motor patterns activate. Mental rehearsal builds real skill. This was not philosophy — it was measurable in fMRI scanners and EEG readings.

Deliberate creation practices — structured visualization, journaling, goal-setting, mind-mapping, vision boards — accumulated decades of psychological research showing that people who write specific plans, visualize outcomes with sensory detail, and regularly revisit their intentions achieve measurably more than those who do not.

Generative AI arrived as the third pillar. Text-to-image. Text-to-music. Text-to-code. Text-to-product. For the first time in history, mental models could be rendered into tangible artifacts in seconds. The imagination renderer had been built.

Together, they form something new: Reality Architecture — the discipline of deliberately constructing your desired future using the full stack of what we now know about how brains create, how intention shapes behavior, and how AI accelerates materialization.

The Neuroscience Foundation: Why Imagination Is Practice

Mental Rehearsal Builds Real Neural Pathways

In 1995, neuroscientist Alvaro Pascual-Leone (later of Harvard Medical School) and colleagues published a landmark study. One group of participants physically practiced a five-finger piano exercise for two hours a day over five days. A second group mentally rehearsed the same exercise, sitting at a piano but only imagining the finger movements. A third group served as a control.

The results were striking. Cortical mapping with transcranial magnetic stimulation (TMS) showed that both the physical practice group and the mental rehearsal group developed measurable changes in motor cortex representation. The mental rehearsal group showed cortical changes similar to those of the physical practice group, although its performance gains were smaller.

Imagination, performed with sufficient vividness and focus, builds the same neural architecture as physical experience.

The Reticular Activating System: Why Writing Plans Works

Dr. Gail Matthews at Dominican University of California ran a study on goal achievement across 267 participants. People who wrote their goals down were 42% more likely to achieve them than those who did not.

A commonly proposed mechanism is the reticular activating system (RAS), a network of neurons in the brainstem that regulates arousal and helps filter what reaches conscious attention. On this account, when you write a specific, detailed goal, and especially when you revisit it repeatedly, you prime that filter to surface relevant opportunities, resources, and patterns.

Dr. Andrew Huberman's work on neuroplasticity reinforces this: focused attention combined with emotional engagement is the signal that tells the brain "this matters, consolidate it." Visualization without emotional engagement is significantly less effective.

Joe Dispenza and the Mind-Body Loop

Dr. Joe Dispenza's research sits at the intersection of neuroscience and epigenetics. His core thesis, supported by studies on thousands of meditators, is that the body cannot distinguish between a vividly imagined experience and an actual one — and that this has measurable biological consequences.

Participants who engaged in intensive mental rehearsal of elevated emotional states showed measurable changes in gene expression, immune markers, and brain coherence as measured by EEG.

The engineering implication: how you spend mental attention is not neutral. You are either reinforcing existing patterns or encoding new ones.

Mirror Neurons and Embodied Cognition

When you draw a system diagram, sketch an interface, or map the architecture of an idea, you are engaging embodied cognition. The physical act of externalizing a mental model reinforces neural encoding in ways that pure internal visualization does not. This is why the best architects, engineers, and designers sketch obsessively.

The AI Acceleration Layer: Collapsing the Gap

Before and After

Before AI: Idea → rough sketch → recruit designer → 3 rounds of revision → recruit developer → 3 months of build → maybe it resembles the original vision. Total time: 6-18 months.

After AI: Idea → prompt → rendered image in 30 seconds → iterate 10 variations in 5 minutes → music in 2 minutes → landing page in 20 minutes → working prototype in 4 hours → product shipped in 1 day. Total time: hours to weeks.

Generative AI as Imagination Renderer

Generative AI takes a mental model — expressed as a prompt — and produces a visual, audio, code, or text artifact. The machine externalizes the internal.

This matters because seeing your idea rendered changes your relationship to it. It triggers the RAS in new ways. It reveals gaps in your thinking that pure internal visualization conceals.

A vague vision produces a vague render. A precise vision produces something you can evaluate and iterate. The quality of your prompts reflects the quality of your thinking.

Iteration Speed Changes Everything

Thomas Edison's laboratory is popularly said to have run some 10,000 experiments before arriving at a working lightbulb filament. Each experiment took days.

With generative AI, the iteration cycle for visual, audio, and code artifacts has collapsed to seconds. I have generated and evaluated 50 visual variations of a concept in the time it previously would have taken to brief a designer.

What This Looks Like at Scale

Since integrating generative AI fully into my creation process:

- 12,000+ songs produced on Suno AI

- 525+ visual assets generated with Gemini and Midjourney

- A complete production website — frankx.ai — built with Claude Code

- 170+ pages of written content across books, courses, and frameworks

- The ACOS framework — a complete agentic creator operating system

One person, operating with clear vision and the right tools, can now produce at studio scale.

The Reality Architecture Framework: A 5-Phase Model

Phase 1: IMAGINE

Neuroscience-backed mental rehearsal — structured journaling, visualization with sensory and emotional specificity, and meditation.

In practice: Spend 15-20 minutes writing your vision with maximum specificity. Not "I want to launch a course." Instead: "On October 15, 2026, I will send the launch email for the ACOS Practitioner Certification. The course has 8 modules, 400 enrolled students in the first cohort..."

Key principle: Add emotion. The feeling of the future state — not just the image of it — drives neural encoding.
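The contrast between the vague and the specific vision above can be made roughly checkable. The following is a minimal sketch and not part of the framework itself: the function name `specificity_signals` and the three signals it counts (a four-digit year, standalone numbers, word count) are illustrative assumptions, and the heuristic says nothing about emotional engagement.

```python
import re
from typing import Dict


def specificity_signals(vision: str) -> Dict[str, object]:
    """Count crude markers of specificity in a written vision (heuristic only)."""
    return {
        # A four-digit year such as 2026 suggests a concrete deadline.
        "has_year": bool(re.search(r"\b(?:19|20)\d{2}\b", vision)),
        # Standalone numbers suggest measurable targets (modules, students).
        "number_count": len(re.findall(r"\b\d+\b", vision)),
        "word_count": len(vision.split()),
    }


# Both sample texts are taken from the Phase 1 example above (second one shortened).
vague = "I want to launch a course."
specific = (
    "On October 15, 2026, I will send the launch email for the ACOS "
    "Practitioner Certification. The course has 8 modules, 400 enrolled "
    "students in the first cohort."
)

vague_signals = specificity_signals(vague)
specific_signals = specificity_signals(specific)
print(vague_signals)
print(specific_signals)
```

A low count is only a prompt to keep writing, not a score to optimize.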

Phase 2: MAP

Externalizing the mental model into visible structure — system diagrams, mind maps, architecture blueprints.

In practice: Ask Claude to generate a Mermaid diagram from a prose description. Use Figma for UI flows. Whiteboard for spatial thinking. The act of drawing and mapping encodes ideas more deeply than pure visualization.
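If you already have a rough outline, you can also produce the Mermaid source yourself before asking an assistant to refine it. The sketch below assumes Mermaid's `mindmap` diagram type, which expresses hierarchy through indentation. The helper name `to_mermaid_mindmap` and the sample outline are hypothetical, chosen to match the course example from Phase 1.

```python
from typing import Dict, List


def to_mermaid_mindmap(root: str, branches: Dict[str, List[str]]) -> str:
    """Render a two-level outline as Mermaid mindmap source text."""
    lines = ["mindmap", f"  root(({root}))"]
    for branch, leaves in branches.items():
        lines.append(f"    {branch}")          # first level under the root
        for leaf in leaves:
            lines.append(f"      {leaf}")      # second level under the branch
    return "\n".join(lines)


# Hypothetical outline for illustration only.
outline = {
    "Curriculum": ["8 modules"],
    "Launch": ["Launch email", "400 students in first cohort"],
}

diagram = to_mermaid_mindmap("ACOS Practitioner Certification", outline)
print(diagram)
```

Paste the printed text into any Mermaid renderer to see the map. Node labels containing brackets or parentheses may need quoting, which this sketch does not handle.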

Phase 3: RENDER

Using generative AI to produce visual, audio, and video representations. Making the invisible visible.

In practice: Generate multiple versions. Five visual representations in Midjourney. Three musical themes in Suno. A rough video walkthrough in Runway. The goal is rapid iteration toward the version that resonates with your Phase 1 vision.

Key principle: Prompt precision reflects thinking precision. If your renders are vague, the vision is not yet clear enough.
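One way to keep variations comparable is to hold the vision text fixed and vary only the angle. The sketch below is an assumption about how that might be organized: `build_render_prompts` is a hypothetical helper, the prompt wording is illustrative, and the five angles are the ones listed in Step 3 of the 60-minute session later in this article. It only builds strings and does not call any image or music tool.

```python
from typing import List, Sequence

# Angles taken from Step 3 of the 60-minute session in this article.
ANGLES = (
    "hero visual",
    "user experience",
    "architecture diagram",
    "brand identity",
    "future state",
)


def build_render_prompts(vision: str, angles: Sequence[str] = ANGLES) -> List[str]:
    """Return one prompt per angle, each restating the same vision text."""
    vision = vision.strip()
    if not vision:
        # Mirrors the key principle: no clear vision, nothing worth rendering.
        raise ValueError("vision must not be empty")
    return [
        f"{angle.capitalize()} for the following project: {vision}"
        for angle in angles
    ]


prompts = build_render_prompts("A certification course with 8 modules")
for prompt in prompts:
    print(prompt)
```

Because every prompt carries the same vision text, differences between renders can be attributed to the angle rather than to drift in the description.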

Phase 4: BUILD

Using agentic AI to construct the actual product, system, or experience.

In practice: Claude Code for development. n8n for automation workflows. ACOS for orchestrating multi-step production. Vercel for deployment.

Key principle: Do not start Phase 4 without completing Phases 1-3. The preparation eliminates wasted time in execution.

Phase 5: ITERATE

Rapid feedback loops using AI-assisted analysis, user data, and structured reflection.

In practice: Analytics on every page. A/B tests on key conversions. Ask Claude to analyze patterns and generate improvement hypotheses. Build a weekly review ritual.
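For the A/B tests mentioned above, a two-proportion z-test is one common way to judge whether a difference in conversion rate is more than noise. This is a minimal sketch using only the standard library. The function name `ab_test` and the visitor and conversion counts are hypothetical, and the test assumes reasonably large, independent samples.

```python
import math
from typing import Tuple


def ab_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> Tuple[float, float]:
    """Two-proportion z-test. Returns (z statistic, two-sided p-value)."""
    if n_a <= 0 or n_b <= 0:
        raise ValueError("sample sizes must be positive")
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate under the null hypothesis of no difference.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in the data; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: variant A converts 40 of 1000 visitors, variant B 60 of 1000.
z, p_value = ab_test(40, 1000, 60, 1000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A threshold such as p < 0.05 is a convention, not a guarantee, so it is worth deciding the sample size before the test starts rather than stopping when the number looks good.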

Tools for Each Phase

| Phase | Tools | Purpose |
|---|---|---|
| IMAGINE | Claude (journaling prompts), Obsidian, meditation apps | Mental clarity, vision specificity |
| MAP | Mermaid.js, Figma, Miro, Excalidraw | Visible structure, gap identification |
| RENDER | Midjourney, Gemini, Suno, Runway, ElevenLabs | Artifact generation, rapid iteration |
| BUILD | Claude Code, ACOS, n8n, Vercel, Cursor | Construction, deployment |
| ITERATE | Vercel Analytics, PostHog, Langfuse, Claude | Feedback loops, compound improvement |

The New Era

The gap between imagination and reality has never been smaller. The limiting factor has shifted from capability to clarity of vision.

AI tools are extraordinarily capable execution engines. But they require clear direction. They amplify what is already in the prompt. This means the work of imagination — the neuroscience-backed practice of building vivid, specific, emotionally engaged mental models — has become more valuable, not less.

The return on clarity has increased.

One person, operating with strong creative vision and the Reality Architecture framework, can now produce at the scale of a studio. The leverage is real. The tooling is available. The framework is here.

Your First Reality Architecture Session (60 Minutes)

Step 1: Write (10 min)

Journal your clearest possible vision for a specific project. Maximum specificity. Do not edit as you write.

Step 2: Map (10 min)

Paste your writing into Claude: "Generate a structured mind map in Mermaid format showing main components, relationships, and sequence. Identify gaps."

Step 3: Render (15 min)

Generate 5 visual representations using your map as reference. Different angles: hero visual, user experience, architecture diagram, brand identity, future state.

Step 4: Select (10 min)

Which render resonates most with the vision you wrote in Step 1? Write what it captures correctly and what it misses.

Step 5: Plan (15 min)

Return to Claude with all materials: "Generate a 30-day implementation roadmap with weekly milestones, appropriate AI tools per phase, and three Day 1 tasks."
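The five steps above add up to the stated 60 minutes. A small sketch like the following, with the hypothetical helper `schedule`, turns the step durations into start and end offsets so you can set timers before you begin.

```python
from typing import List, Tuple

# Step names and durations (in minutes) as given in the session above.
SESSION = [("Write", 10), ("Map", 10), ("Render", 15), ("Select", 10), ("Plan", 15)]


def schedule(steps: List[Tuple[str, int]]) -> Tuple[List[Tuple[str, int, int]], int]:
    """Return (name, start_minute, end_minute) rows and the total duration."""
    rows = []
    elapsed = 0
    for name, minutes in steps:
        rows.append((name, elapsed, elapsed + minutes))
        elapsed += minutes
    return rows, elapsed


rows, total = schedule(SESSION)
for name, start, end in rows:
    print(f"{start:>2}-{end:<2} min  {name}")
print(f"Total: {total} minutes")
```

If you change a step's duration, the later offsets shift automatically, which makes it easy to adapt the session to a shorter or longer block.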

Going Deeper

- Research: Reality Architecture — The deeper academic and empirical foundation

- GenCreator Framework — The full system for AI-native creative production

- ACOS: Agentic Creator OS — The operational layer for Phase 4 (BUILD)

- Prompt Library — Tested prompts for Suno, Midjourney, Claude — precision instruments for Phase 3

- Personal AI CoE — The infrastructure framework behind Reality Architecture

FAQ

Is Reality Architecture just visualization with extra steps?

Visualization is one component of Phase 1. Reality Architecture is a full operational framework spanning from mental clarity through shipped product. The key distinction is that generative AI collapses the execution gap. Imagination without execution is incomplete.

What neuroscience supports this?

Three key findings: (1) Pascual-Leone's 1995 study showing that mental rehearsal produces measurable motor cortex changes, as physical practice does, (2) Dr. Gail Matthews' study showing that writing goals down increases achievement by 42%, and (3) reticular activating system research showing that clear mental models filter attention toward relevant opportunities.

Do I need to be technical for the BUILD phase?

Claude Code and similar agentic AI tools are increasingly accessible to people without engineering backgrounds. The key is clear communication of requirements — which comes from strong Phase 1 and Phase 2 work.

How does this relate to ACOS and the Personal AI CoE?

ACOS is the BUILD phase implementation. The Personal AI CoE is the infrastructure framework. Reality Architecture is the overarching discipline connecting vision (neuroscience) to execution (AI systems). They are complementary layers of the same system.

What is the most common mistake?

Skipping Phase 1 and treating AI tools as a shortcut to clarity. Generative AI amplifies the quality of your thinking; it does not substitute for it. The 20-30 minutes spent in Phases 1-2 typically save 10-20 hours in Phase 4.

How long until results?

A solo content product can move through all five phases in a week. A complex software system takes 4-8 weeks. Most practitioners report a 60-80% reduction in time from idea to shipped artifact.
