How I Built a Research System with n8n and Claude

Creator SystemsMarch 23, 202610 min read

How I Built a Research System with n8n and Claude

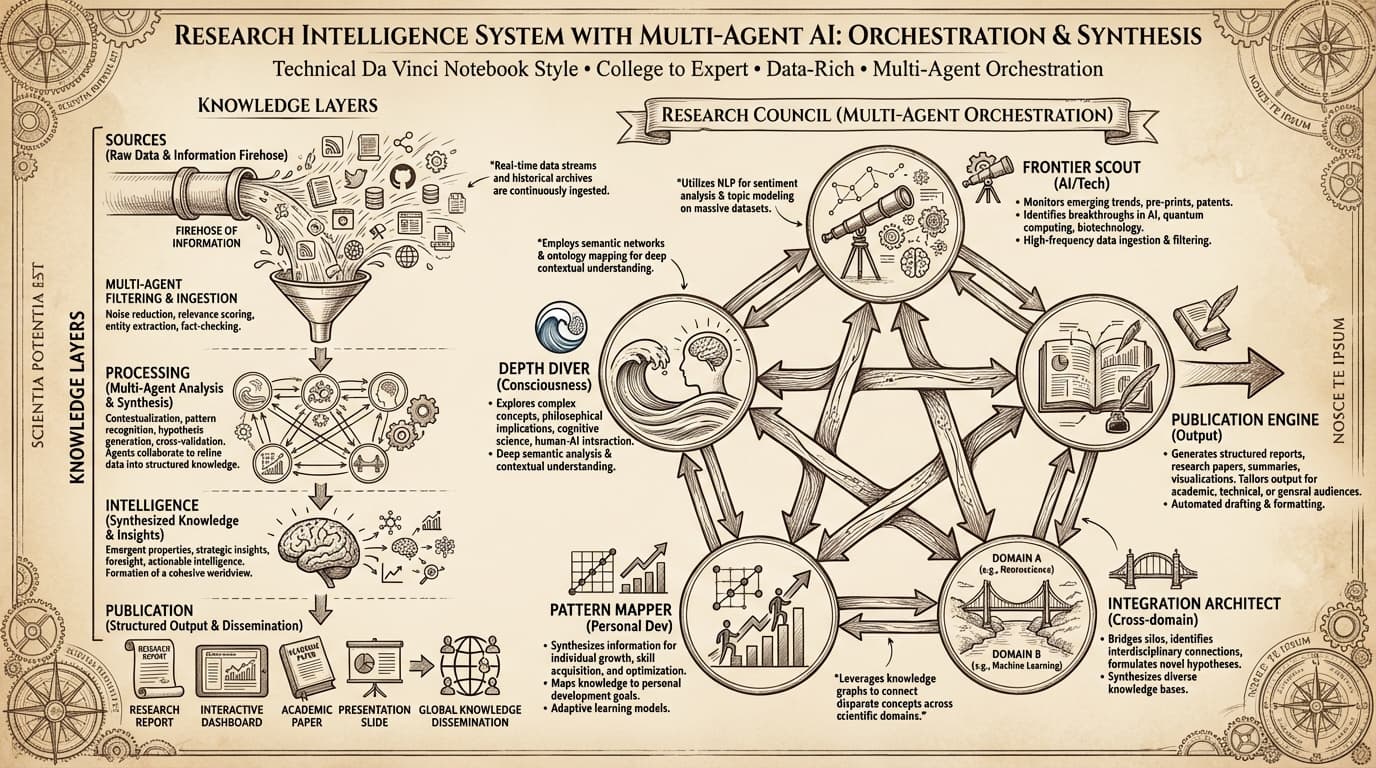

The automated research pipeline that reads papers, synthesizes findings, and builds a searchable knowledge base — with 9 n8n workflows.

🎯

Reading Goal

You will know how to build an automated research pipeline that gathers, synthesizes, and organizes information — using n8n and Claude.

TL;DR: Four n8n workflows, one Railway instance, $5/month. My research intelligence system monitors 30+ RSS feeds, curates AI papers with relevance scoring, synthesizes weekly findings with Claude into structured briefs, and saves everything to Notion. After 4 months running unattended, it feeds directly into every blog post, product decision, and coaching conversation. This is the exact architecture — nodes, prompts, and lessons learned.

Why I Built a Research System Instead of Reading More

In early 2024 I was reading too much and retaining too little. Newsletters, arXiv papers, Substack threads, Twitter threads — hours per week going in, almost nothing actionable coming out.

The problem was not a lack of information. It was the absence of a system that forced synthesis.

Reading is passive. Synthesis is active. My brain was skimming instead of building a knowledge base.

So I built one. An automated research intelligence system running on n8n that does the reading, does the synthesis, and surfaces only what matters — in a format I can act on.

Here is the full architecture.

The Four-Workflow Pipeline

The system breaks into four n8n workflows, each with a specific job. They run in sequence across the week, each feeding the next.

Workflow 1: RSS Monitor

5 nodes | Every 4 hours

This is the intake filter. It watches 30+ RSS feeds across AI research (arXiv AI/ML categories, Anthropic blog, OpenAI research, DeepMind publications), creator tools (n8n blog, Vercel changelog, Notion updates), and enterprise AI (Oracle AI, McKinsey tech insights, Gartner AI).

Every 4 hours it fetches new items, runs each title and summary through a relevance scoring node, and filters to items scoring 8/10+. Lower-scoring items are discarded. High-scoring items go to Notion as "Research Inbox" entries with the source, URL, summary, and relevance score attached.

The scoring prompt is precise:

Rate this article's relevance to an AI Architect who creates content

about personal AI systems, enterprise AI, and creator tools.

Score 1-10. Return JSON: {"score": 7, "reason": "Covers..."}.

Article: {title} — {summary}

Gemini Flash Lite handles this step. It runs fast enough that the 4-hour cycle never backs up.

What it prevents: Reading every headline manually. The 8/10 threshold means I see 3-5 items per day instead of 50+.

Workflow 2: Morning Intelligence Brief

7 nodes | Daily at 7:00 AM UTC

Takes yesterday's Notion Research Inbox entries (8+ score) and builds a 5-bullet morning brief. One bullet per topic cluster: AI models, tooling, enterprise, creator tools, and wild cards.

The Claude synthesis prompt for this step:

You are a research synthesis assistant for Frank Riemer, AI Architect.

Review these research items from the last 24 hours.

Identify 5 distinct themes (not 5 random items — themes that group items).

For each theme: one sentence on what happened, one sentence on what it means.

Prioritize signal over comprehensiveness.

Items: {research_items}

Output goes to Slack #morning-brief. I read it in 90 seconds before my first meeting.

The key design decision: Claude, not Gemini, for this step. Gemini Flash Lite scores relevance fast. Claude synthesizes meaning. Different tasks, different models.

Workflow 3: Strategic Intelligence Brief

9 nodes | Sundays at 8:00 PM UTC

Weekly deep synthesis. Pulls the entire week's Research Inbox from Notion, runs a longer Claude analysis, and produces a structured weekly brief with four sections:

- Trend signals — Directional shifts that appeared across multiple sources this week

- Competitive intelligence — What others shipped, what it signals

- Opportunity surface — Where gaps exist I could address with content or products

- Action items — Specific things worth doing based on this week's intelligence

This brief saves directly to Notion Intelligence Vault — a separate database from the Research Inbox. The Vault is the searchable knowledge base. Every week of briefs accumulates there.

The Notion schema for each brief:

| Field | Type | Purpose |

|---|---|---|

| Week | Date | Week starting date |

| Trend signals | Rich text | Directional shifts |

| Competitive intel | Rich text | What others shipped |

| Opportunity surface | Rich text | Gap analysis |

| Action items | Rich text | Specific next actions |

| Source count | Number | Items synthesized |

| Blog seeds | Multi-select | Topics worth writing |

After 4 months, the Vault holds 16 weekly briefs. Searching it is faster than trying to remember.

Workflow 4: Research Intelligence Hub

8 nodes | Webhook: POST /research

The on-demand layer. When I need research on a specific topic — say, I'm writing about MCP server architecture and want to know what the field has produced in the last 30 days — I POST a query to this webhook.

The workflow:

- Receives

{query: "MCP server patterns 2026", depth: "deep"} - Searches Notion Intelligence Vault for related prior briefs

- Fetches fresh results from configured sources

- Claude synthesizes: prior knowledge + fresh findings → structured brief

- Returns JSON brief and saves to Vault

This is where the research system becomes a genuine knowledge base rather than just a feed aggregator. Prior briefs inform new synthesis. The system remembers.

The Mega Orchestrator routes RESEARCH-intent commands to this webhook — meaning I can trigger a research query from Slack, my website admin panel, or Claude Code directly.

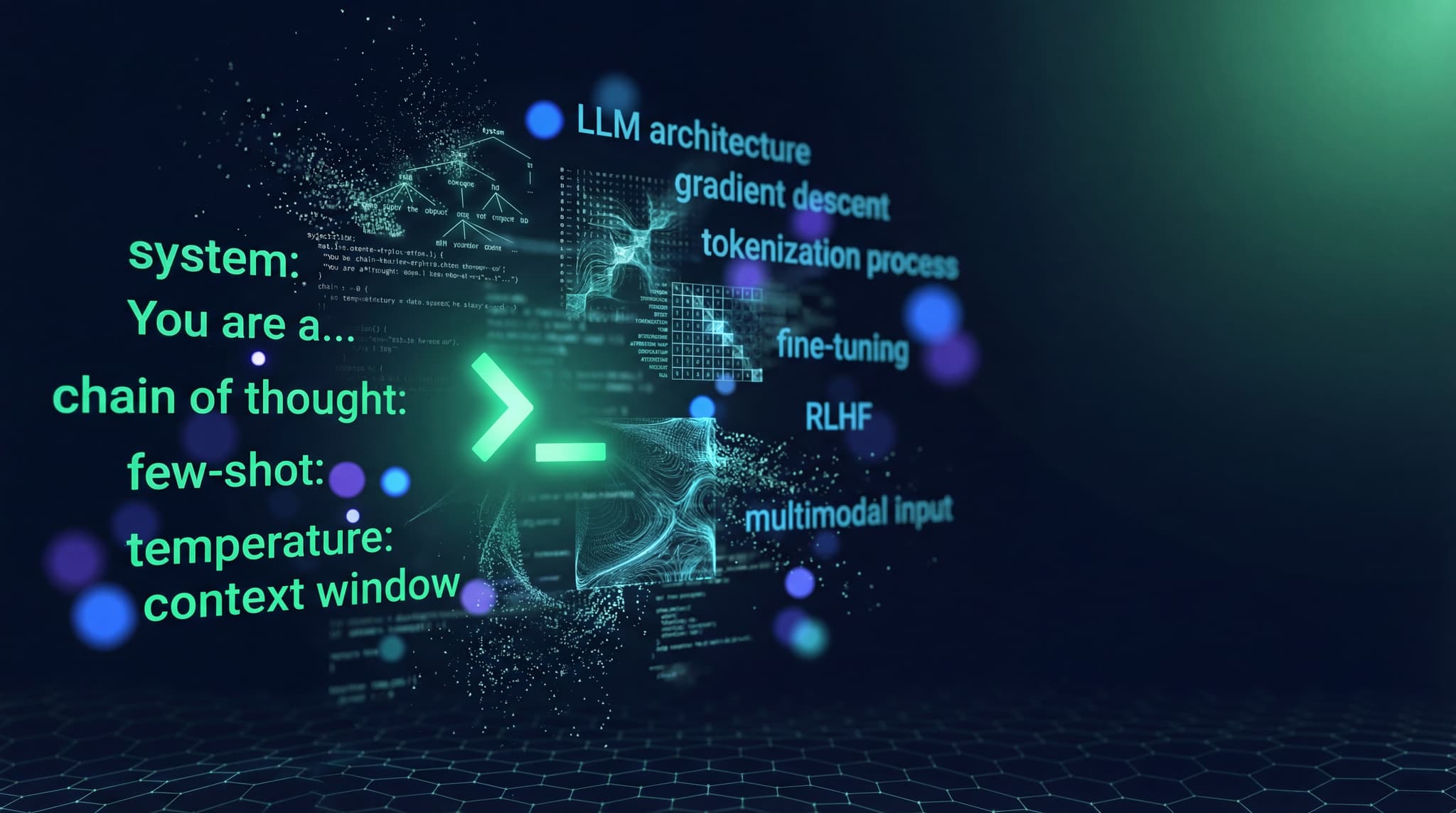

How Claude Does the Synthesis

The quality of the briefs depends entirely on the synthesis prompt. I went through four iterations before the output was genuinely useful.

Version 1 (discarded): "Summarize these articles." Output: a list of article summaries. Not synthesis. Useless.

Version 2 (better): "Identify themes and explain what they mean." Output: themed groupings, but too academic. No actionability.

Version 3 (good): "Identify themes, explain what they mean, and suggest what I should do." Output: useful, but Claude would suggest arbitrary actions without knowing my specific context.

Version 4 (production): Context injection changes everything.

You are synthesizing research for Frank Riemer.

Context: AI Architect at Oracle EMEA. Creator of ACOS personal AI system.

Audience: Publishes for individual creators and professionals building personal AI stacks.

Products: ACOS framework, prompt library, research hub at frankx.ai.

Given these research items from this week:

{items}

Produce a structured brief:

1. TREND SIGNALS: 2-3 directional shifts, one sentence each

2. COMPETITIVE INTEL: What notable AI tools or frameworks shipped

3. OPPORTUNITY SURFACE: Where is there a gap I could address?

4. ACTION ITEMS: 3 specific, concrete actions worth considering

Be precise. Ground everything in the research items provided.

The shift from Version 3 to 4: telling Claude who I am and what I create. Without that, the suggestions are generic. With it, they reference specific gaps in my content catalog or product suite.

The Notion Database Structure

Two databases power the system:

Research Inbox — High-velocity, volatile. Items flow in daily and get archived weekly. Schema: source, URL, title, summary, relevance score, date, status (New / Reviewed / Archived).

Intelligence Vault — Low-velocity, permanent. Weekly briefs plus on-demand research outputs. The Blog seeds multi-select field tags topics worth writing. I review the Vault every Monday and pick seeds for the week's content.

From Research to Content: The Flywheel

The research system does not exist in isolation. It feeds three downstream outputs:

Blog content. Blog seeds from the Vault become article outlines. The Strategic Brief becomes the "why now" framing in each post. The specific research items become citations and examples. My n8n automation guide started as a Vault entry tagged "n8n creator patterns — gap in existing content."

Product decisions. The Opportunity Surface section surfaces gaps in my product catalog. The ACOS framework FAQ was updated three times based on questions surfacing in research about what creators actually struggle with. Those updates came from the Vault, not from guessing.

Coaching conversations. When a coaching client asks about a specific topic, I search the Vault first. Instead of recalling from memory, I pull structured synthesis with sourced claims. The answers are better. See the full personal AI CoE framework for how research infrastructure fits into a broader system.

The research system makes everything else better because it externalizes knowledge into a searchable format rather than relying on recall.

Cost Breakdown

Infrastructure: $5/month n8n on Railway. Notion free tier handles the database. Claude API for synthesis averages roughly $0.30/week at current token pricing — the Strategic Brief runs about 3,000 tokens per synthesis.

Time recovered: 8-10 hours per week previously spent manually reading sources, deciding what is relevant, and trying to remember what I read.

The compounding effect: The system catches patterns across weeks that manual reading misses. The shift from GPT-4 dominance to Claude 3.5 as the practitioner-preferred model was visible in the briefs three weeks before I consciously noticed it. Temporal pattern recognition — spotting directional change across time — is what the Vault is actually for.

Three Honest Limitations

Primary source reading remains irreplaceable. The summaries are good enough for awareness, not deep expertise. When I need genuine fluency in a topic, I still read the source material. The system tells me what to read deeply, not the deep reading itself.

Relevance scoring drifts. My interests shift faster than the scoring prompt. Every 6-8 weeks I update the prompt to reflect current priorities. This is maintenance the system cannot automate.

Synthesis quality depends on source quality. When a week's RSS intake is mostly press releases, the briefs are thin. Curating the RSS list is ongoing work. The system amplifies source quality — it does not compensate for poor sources.

Building Your Own: The Minimum Viable Version

Start with two workflows:

- RSS Monitor — 4-hour cycle, relevance scoring, save 8+ items to Notion

- Weekly Brief — Pull the week's items, Claude synthesis, deliver to Slack

Add the Strategic Intelligence layer once you have 4+ weeks of data accumulating. Add the on-demand Research Hub when you find yourself wanting to query specific topics regularly.

The full stack — n8n on Railway, Notion free tier, Claude API — runs under $15/month total.

The prompt library has the synthesis prompts formatted for direct use. The ACOS framework shows how a research system fits into a complete personal AI architecture.

FAQ

What RSS feeds should I start with? Start narrow. Five to eight sources in your specific niche rather than 30 broad ones. Relevance scoring is a filter, not a replacement for source quality. For AI practitioners: arXiv cs.AI, the Anthropic blog, Simon Willison's blog, the Vercel changelog, and one or two topic-specific newsletters.

Can I use a different model instead of Claude for synthesis? Yes. Gemini Flash handles relevance scoring well at lower cost. Claude's advantage surfaces in synthesis — it maintains coherence across 50+ research items in a single prompt better than most alternatives. For daily briefs, GPT-4o mini works. For the Strategic Brief, use the best model you can afford.

How do I handle the system surfacing too much? Raise the relevance threshold from 8 to 9. Cut RSS sources by half. The correct calibration: you read every morning brief fully without skimming. If you are skimming, the filter is too loose.

Does the Notion free tier have limits that affect this system? The main constraint is the API rate limit (3 requests/second) and block storage. With daily intake of 3-5 items and weekly synthesis, the free tier handles it comfortably. You hit limits only at high-frequency monitoring across hundreds of sources.

How long before the system produces genuinely useful output? The daily brief is useful from week one. The Strategic Brief becomes insightful around week six, when there is enough prior context for Claude to detect directional change rather than just summarizing the current week. The Intelligence Vault compounds — patience on that layer pays off.

Get Started

Build your first AI system

Step-by-step guide to setting up ACOS, creating your first agent, and shipping real products with AI.

Start buildingTemplates & Blueprints

Production-ready architecture

Download AI architecture templates, multi-agent blueprints, and prompt engineering patterns.

Browse templatesInner Circle

Join the builder community

Connect with creators and architects shipping AI products. Weekly office hours, shared resources, direct access.

Join the circleRead on FrankX.AI — AI Architecture, Music & Creator Intelligence

Stay in the intelligence loop

Weekly field notes on AI systems, production patterns, and builder strategy.

Continue Reading

Intelligence Dispatches11 min read

Claude, GPT, Gemini, DeepSeek: Which Model for Which Task?

Every major frontier model compared — architecture, capabilities, pricing, and which to use for coding, research, creative work, and enterprise deployment.

Read article

Creator Systems6 min read

n8n Automation: 9 Workflows That Run My Creative Empire

Production n8n workflows for content atomization, newsletter automation, music catalog sync, research intelligence, and AI-orchestrated publishing pipelines.

Read article

Intelligence Dispatches13 min read

Prompt Engineering in 2026: What Still Works

The prompting techniques that survived the model upgrades — structured output, chain-of-thought, few-shot, and what to stop doing.

Read article