Claude, GPT, Gemini, DeepSeek: Which Model for Which Task?

Intelligence Dispatches · March 31, 2026 · 13 min read

Every major frontier model compared — architecture, capabilities, pricing, and which to use for coding, research, creative work, and enterprise deployment.

Reading Goal

You will understand every major frontier model family — their architecture, sweet spots, and which to choose for different tasks.

Updated March 31, 2026: Model pricing, specs, and availability refreshed. Added GPT-5, DeepSeek V3.2, Gemini 3 Pro, and Grok 4.1.

TL;DR: The frontier model landscape in 2026 is no longer a two-horse race. Six distinct model families now compete at the top — each with genuine architectural advantages and real tradeoffs. Claude Opus 4.6 leads in agentic coding and long-context reasoning. GPT-4o and o3 dominate multimodal benchmarks and enterprise integrations. Gemini 2.5 Pro brings 1M+ token context with native Google grounding. DeepSeek's open-weight R1 and V3 shattered the cost-per-token curve. Meta's Llama 4 powers the open-source fine-tuning ecosystem. Mistral anchors European data sovereignty. The right model depends on your task — and the best practitioners use several simultaneously.

Why 2026 Is Different

A year ago, "best AI model" was a simpler question. Today it depends on whether you mean coding, research synthesis, image understanding, long documents, cost-sensitivity, or open-weight deployability. Each dimension has a different answer.

Three structural shifts define 2026:

Reasoning became mainstream. Chain-of-thought and extended thinking are no longer premium features — they ship in mid-tier model variants.

Context exploded past practical limits. 1M-token context windows exist. The harder problem is whether models actually use that context faithfully.

Open-weight models reached near-frontier performance. DeepSeek V3 and Llama 4 perform within a narrow band of GPT-4o on many benchmarks, at a fraction of the API cost.

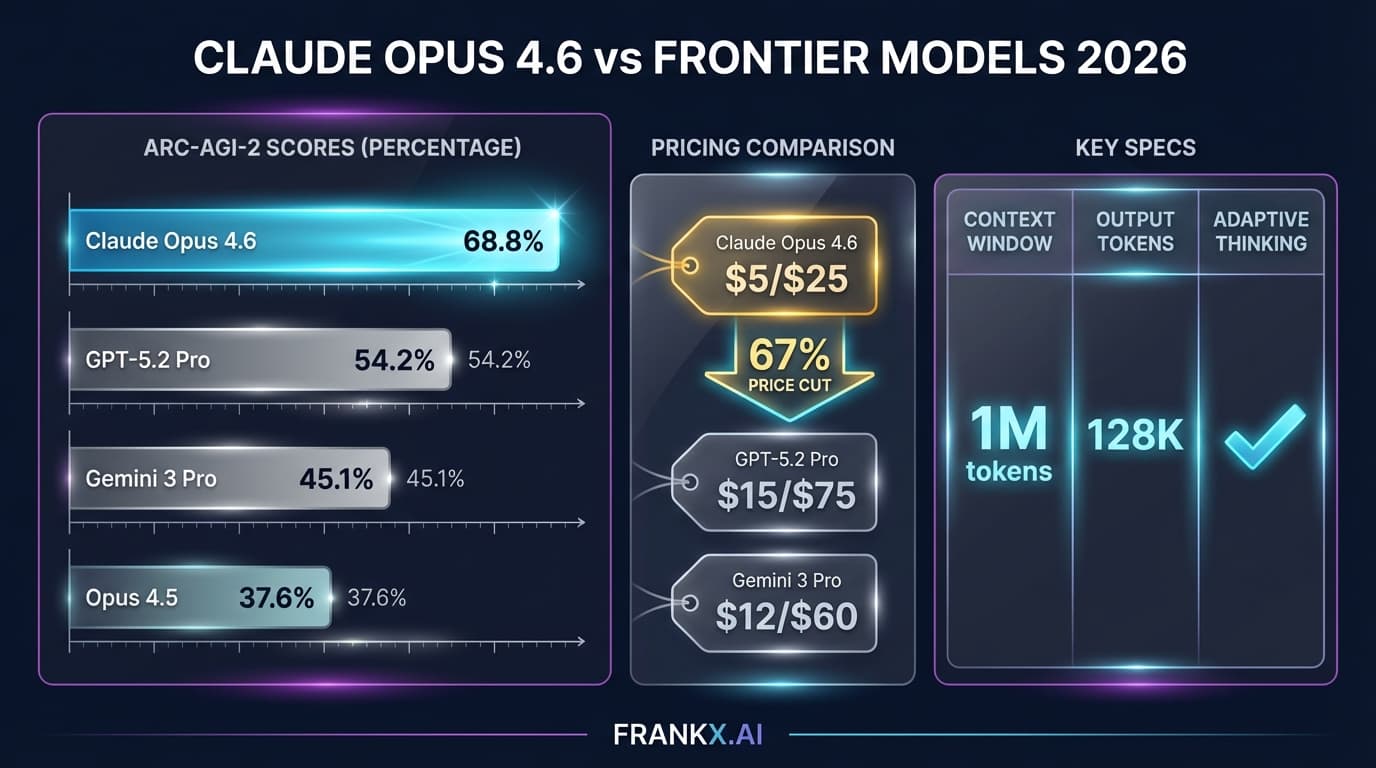

Anthropic Claude — Opus 4.6, Sonnet 4.6, Haiku 4.5

Claude is Anthropic's flagship model family, built around constitutional AI training that prioritizes interpretability, safety, and reasoning depth.

Claude Opus 4.6

The ceiling of the Claude family — designed for tasks where quality matters more than speed. Extended thinking mode allows multi-step reasoning chains that surface before the final answer, making it possible to verify logic rather than just the output.

Context window: 200K tokens standard, 1M with extended context. Opus handles the full 200K faithfully: document retrieval at position 180K is nearly as accurate as at position 5K.

Coding is where Opus earns its premium. Claude Code — Anthropic's agentic coding environment — handles multi-file refactors, dependency management, and test generation with consistency that shorter-context models cannot match.

Tool use and MCP (Model Context Protocol) are native to Claude. Opus connects to external systems through a structured protocol designed to be composable and auditable.

Sweet spot: Complex agentic workflows, large codebase work, research synthesis, any task where extended thinking produces verifiably better outputs.

Claude Sonnet 4.6

The balance point. Most of Opus's capability at significantly lower cost and latency. The practical default for most production deployments. For content generation, analysis, coding assistance, and document work, Sonnet is where most teams spend the majority of their tokens.

Claude Haiku 4.5

Speed and efficiency — sub-second responses, low cost. Handles classification, summarization, extraction, and lightweight generation. Improved substantially on instruction following compared to its predecessor.

Pricing: Haiku ~$1/M input, $5/M output. Sonnet ~$3/M. Opus ~$15/M.
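To turn per-million-token list prices into a per-request figure, a rough sketch like the following can help. It uses only the approximate Haiku rates quoted above (~$1/M input, $5/M output); the function name `request_cost` and the token counts are illustrative assumptions, and actual bills depend on the provider's current pricing.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_price_per_m: float, output_price_per_m: float) -> float:
    """Return the USD cost of one request given per-million-token prices."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


# Approximate Haiku rates quoted in this article (USD per million tokens).
HAIKU_INPUT, HAIKU_OUTPUT = 1.00, 5.00

# Hypothetical workload: 2,000 input tokens and 500 output tokens per request.
cost = request_cost(2_000, 500, HAIKU_INPUT, HAIKU_OUTPUT)
print(f"${cost:.4f} per request")
```

Because output tokens are priced several times higher than input tokens, short prompts with long answers can cost more than long prompts with short answers.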

For a deeper dive: Claude Opus 4.6 analysis.

OpenAI — GPT-5, GPT-4o, o3, o4-mini

OpenAI's lineup bifurcated into two tracks: the GPT series for multimodal general intelligence, and the o-series for explicit reasoning chains. GPT-5 now sits at the top of the family.

GPT-5 and GPT-5.2

OpenAI's latest flagship. GPT-5 unifies the GPT and o-series lines into a single model that handles both multimodal tasks and extended reasoning natively. GPT-5.2 refines this further with improved tool use and agentic reliability. Context window: 256K tokens. For teams already deep in the OpenAI ecosystem, GPT-5 is the new default for high-capability workloads.

GPT-4o

Remains a strong option at lower cost. Natively multimodal — images, audio, video, and text in a unified architecture. For tasks requiring genuine vision reasoning, 4o's visual grounding remains a benchmark leader. Function calling and structured output reliability are strong. The developer ecosystem around 4o is the most mature of any frontier model.

Context window: 128K tokens. Faithful recall across the full window is solid.

o3 and o4-mini

The o-series introduces explicit extended reasoning — the model allocates compute to a private chain of thought before responding. For mathematical reasoning, complex code generation, and multi-step problem decomposition, o3 performs at a level older GPT variants could not approach.

o4-mini represents the latest iteration on small reasoning models — more capable than o3-mini on most benchmarks while maintaining comparable speed. The practical default for reasoning-at-scale.

Pricing: GPT-5 pricing varies by tier. GPT-4o ~$2.50/M input. o3 is significantly higher, at ~$10/M and up. o4-mini ~$1.10/M.

Google Gemini — 3 Pro, 2.5 Pro, 2.5 Flash

Google's Gemini family enters 2026 with architectural advantages in specific contexts — particularly anything touching Google's data infrastructure or requiring million-token context. Gemini 3 Pro now leads the lineup.

Gemini 3 Pro

Google's latest generation model with substantially improved reasoning, coding, and multimodal capabilities over 2.5 Pro. Native image generation is a standout feature — Gemini 3 Pro produces high-quality images directly within conversations. Extended context and grounded search carry over from the 2.5 line with improved faithfulness.

Gemini 2.5 Pro

Operates at 1M+ token context natively, and unlike some competitors, Gemini's MoE architecture maintains reasonable recall quality across the full window. For research synthesis tasks, such as reading entire paper corpora or full codebases, this is the operational advantage.

Grounded search integration is a distinct capability: Gemini can call Google Search natively and synthesize results into responses with citations. For research-heavy workflows, this collapses the gap between "what the model knows" and "what is currently true."

Gemini 2.5 Flash

Speed and cost priority. Strong performance on structured tasks at a fraction of Pro's cost. The production workhorse for high-volume Google AI deployments.

Pricing: Gemini 3 Pro pricing varies by tier. Gemini 2.5 Pro ~$1.25/M input, $10/M output. Flash ~$0.15/M.

DeepSeek — V3.2, R1, V3, and the Open-Weight Disruption

DeepSeek's releases did more to reshape frontier model economics than any other development in the period. The V3.2 release marks a major leap forward.

DeepSeek V3.2 and V3.2-Speciale

A significant upgrade over V3 and R1. V3.2 integrates reasoning (thinking) directly into tool-use workflows — the model can reason through multi-step tool calls without requiring a separate reasoning model. V3.2-Speciale pushes this further with enhanced agentic capabilities and more reliable structured output. Benchmarks place V3.2 competitive with GPT-4o and Claude Sonnet on coding and reasoning tasks.

DeepSeek V3

General-purpose LLM with a Mixture-of-Experts architecture: 671B total parameters, of which ~37B are active per token. Near-frontier performance at training costs that prompted an industry-wide rethink of efficiency.

As an open-weight model (downloadable, self-hostable, fine-tunable), V3 enables workloads that closed APIs cannot: air-gapped deployments, custom fine-tuning on proprietary data, and unlimited inference at compute cost rather than per-token pricing.
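The Mixture-of-Experts figures quoted for V3 mean that only a small share of the weights takes part in processing any one token. The sketch below computes that share and shows a toy top-k router to illustrate the idea. The router is a generic illustration under simplifying assumptions, not DeepSeek's actual routing code, and the names `active_fraction` and `top_k_route` are hypothetical.

```python
import math


def active_fraction(active_params_b: float, total_params_b: float) -> float:
    """Share of parameters used per token in a sparse MoE model."""
    return active_params_b / total_params_b


def top_k_route(router_logits: list[float], k: int) -> dict[int, float]:
    """Pick the k highest-scoring experts and softmax-normalize their weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    peak = max(router_logits[i] for i in chosen)  # subtract for numerical stability
    exps = {i: math.exp(router_logits[i] - peak) for i in chosen}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


# Figures from the article: ~37B active out of 671B total parameters.
v3_share = active_fraction(37, 671)
print(f"V3 active share: {v3_share:.1%}")

# Toy example: four experts, route each token to the best two.
weights = top_k_route([0.1, 2.0, -1.0, 1.5], k=2)
print(weights)
```

Only the selected experts run for a given token, which is why per-token compute tracks the active parameter count rather than the total.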

DeepSeek R1

Reasoning-specialized, analogous to OpenAI's o-series but open-weight. Produces explicit chain-of-thought reasoning traces. Strong on mathematical and logical reasoning. The open-weight nature means researchers can study reasoning traces, fine-tune on domain-specific reasoning, and deploy without per-token costs.

Where DeepSeek fits: Cost-sensitive production. Air-gapped deployments. Research requiring model inspection. V3.2 is now the recommended starting point for new DeepSeek deployments.

Meta Llama 4 — Scout and Maverick

Llama 4 marks the maturation of the open-source frontier.

Llama 4 Scout

Efficiency-optimized: an MoE model with 17B active parameters and a 10M-token context window, the largest advertised context of any model covered here. For document-scale applications at low cost on commodity hardware, Scout's architecture is distinctive.

Llama 4 Maverick

Also runs 17B active parameters, but draws them from a 400B-total MoE pool. Its benchmark performance competes with frontier models on many tasks, making it the open-source model that most consistently challenges GPT-4o and Claude Sonnet.

The Llama ecosystem advantage: Community. The broadest fine-tuning ecosystem of any open-weight model family. Hundreds of domain-specific fine-tunes. Mature tooling (LlamaIndex, Ollama, llama.cpp, vLLM).

Mistral — European Sovereignty

Mistral Large competes with GPT-4o-class models, with particular strength in multilingual tasks and European language support. For enterprises with EU data residency requirements, Mistral Large on EU infrastructure is often the only compliant frontier option.

Codestral is Mistral's code-specialized model — competitive with larger general models on pure coding benchmarks, efficient enough for real-time IDE integration.

Also in the Field

Grok 4.1 (xAI): xAI's latest model with substantially improved reasoning and coding over Grok 3. Real-time X/Twitter data integration remains a native capability, and DeepSearch mode combines web and X data in its answers. Grok 4.1 is competitive on coding benchmarks and benefits from xAI's growing compute infrastructure.

Qwen 2.5 (Alibaba): The strongest model from a Chinese frontier lab other than DeepSeek. Particular strength in Chinese-language and Asian multilingual applications.

The Comparison Table

| Model | Context | Coding | Research | Creative | Enterprise | Price (per 1M tokens) |
|---|---|---|---|---|---|---|
| Claude Opus 4.6 | 200K/1M | ★★★★★ | ★★★★★ | ★★★★ | ★★★★★ | ~$15/M |
| Claude Sonnet 4.6 | 200K | ★★★★ | ★★★★ | ★★★★ | ★★★★ | ~$3/M |
| Claude Haiku 4.5 | 200K | ★★★ | ★★★ | ★★★ | ★★★★ | ~$1/M in, $5/M out |
| GPT-5 | 256K | ★★★★★ | ★★★★★ | ★★★★ | ★★★★★ | Varies by tier |
| GPT-4o | 128K | ★★★★ | ★★★★ | ★★★★ | ★★★★★ | ~$2.50/M |
| o3 | 128K | ★★★★★ | ★★★★★ | ★★★ | ★★★★ | ~$10/M+ |
| o4-mini | 128K | ★★★★ | ★★★★ | ★★★ | ★★★★ | ~$1.10/M |
| Gemini 3 Pro | 1M+ | ★★★★★ | ★★★★★ | ★★★★★ | ★★★★ | Varies by tier |
| Gemini 2.5 Pro | 1M+ | ★★★★ | ★★★★★ | ★★★★ | ★★★★ | ~$1.25/M in, $10/M out |
| DeepSeek V3.2 | 128K | ★★★★★ | ★★★★ | ★★★★ | ★★★ | Self-hosted |
| DeepSeek V3 | 128K | ★★★★ | ★★★★ | ★★★★ | ★★★ | Self-hosted |
| DeepSeek R1 | 128K | ★★★★ | ★★★★★ | ★★★ | ★★★ | Self-hosted |
| Llama 4 Maverick | 1M | ★★★★ | ★★★★ | ★★★ | ★★★ | Self-hosted |
| Grok 4.1 | 128K | ★★★★ | ★★★★ | ★★★ | ★★★ | Varies by tier |
| Mistral Large | 128K | ★★★★ | ★★★ | ★★★★ | ★★★★ | ~$2/M |
| Codestral | 32K | ★★★★★ | ★★★ | ★★ | ★★★ | ~$0.20/M |

How I Use Multiple Models

Running a single model for everything is a beginner pattern. Here is my actual workflow:

Claude Code for development. Claude Sonnet 4.6 through Claude Code is my primary development environment. Long context, extended thinking, and MCP-native tool integration make it the most capable agentic coding environment. For complex architectural decisions, I escalate to Opus.

Gemini 2.5 Pro for deep research synthesis. When I need to read an entire industry report or a set of academic papers simultaneously, Gemini's million-token context is the right tool.

Gemini for image generation. When image generation is part of a content pipeline, I call Gemini's image capabilities through dedicated tooling in my stack.

Claude for long-form writing. Blog posts, documentation, course content: Claude Sonnet is my default. Its voice is more natural for long-form work, and it follows brand voice guidelines more consistently.

o3 or DeepSeek R1 for reasoning-intensive analysis. When I need explicit steps I can verify — pricing models, algorithm selection, multi-constraint optimization — I reach for reasoning models.

Llama 4 for experiments requiring full model control. Fine-tuning on proprietary data, sensitive documents that cannot leave local infrastructure — Llama 4 via Ollama.

For ongoing research: State of AI 2026 and Generative AI research hub.

For students building AI fluency: AI Briefing.

The Model Selection Framework

Stop asking "what's the best model?" Start asking "what's the best model for this task at this cost tolerance?"

For coding: Claude Opus/Sonnet via Claude Code. GPT-5 for OpenAI-ecosystem teams. Budget option: o4-mini or Codestral. Self-hosted: DeepSeek V3.2 or Llama 4 Maverick.

For long document research: Gemini 2.5 Pro when >100K tokens. Claude Opus when reasoning quality matters more than raw context.

For creative writing: Claude Sonnet for voice consistency. GPT-4o for diverse style range. Mistral Large for multilingual.

For enterprise with SLA: GPT-4o or Claude Sonnet — most mature API infrastructure and compliance certifications.

For cost-sensitive production: Gemini Flash, Claude Haiku, or o4-mini for API. DeepSeek V3.2 or Llama 4 Scout for self-hosted.

For data sovereignty: Llama 4 Maverick for max capability. Mistral Large for European regulatory contexts.
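The framework above can be expressed as a small lookup table, which is roughly how a routing layer in an application might encode it. This is a simplified sketch: the names `ROUTES` and `pick_model` are hypothetical, the strings are the plain model names used in this article rather than real API identifiers, and the research rule reduces the article's guidance to a single token threshold.

```python
# Task/deployment pairs and picks mirror the selection framework in this article.
ROUTES = {
    ("coding", "api"): "Claude Opus/Sonnet via Claude Code",
    ("coding", "budget"): "o4-mini or Codestral",
    ("coding", "self_hosted"): "DeepSeek V3.2 or Llama 4 Maverick",
    ("creative", "api"): "Claude Sonnet",
    ("enterprise", "api"): "GPT-4o or Claude Sonnet",
    ("cost_sensitive", "api"): "Gemini Flash, Claude Haiku, or o4-mini",
    ("cost_sensitive", "self_hosted"): "DeepSeek V3.2 or Llama 4 Scout",
}


def pick_model(task: str, deployment: str = "api", prompt_tokens: int = 0) -> str:
    """Return the article's suggested model for a task and deployment style."""
    if task == "research":
        # Simplification: above 100K tokens raw context wins, otherwise reasoning.
        return "Gemini 2.5 Pro" if prompt_tokens > 100_000 else "Claude Opus"
    try:
        return ROUTES[(task, deployment)]
    except KeyError:
        raise ValueError(
            f"no route for task={task!r}, deployment={deployment!r}"
        ) from None


print(pick_model("research", prompt_tokens=250_000))
print(pick_model("coding", "self_hosted"))
```

Keeping the routing in data rather than scattered conditionals makes it easy to revise as models and prices change.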

What Matters More Than Benchmarks

Context faithfulness at depth. Test retrieval at multiple depths in your actual document lengths before committing.

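Tool use consistency. A model that calls tools correctly 95% of the time looks close to one at 98%, but per-step errors compound in multi-step pipelines. Across ten sequential tool calls, 95% per-call reliability yields about 60% end-to-end success, while 98% yields about 82%.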

Instruction following on complex constraints. Format, length, persona, forbidden topics, required inclusions — across long outputs, this degrades substantially in some models.

API reliability. Uptime, rate limits, and predictable latency matter as much as raw capability for production systems.

Cost at your actual consumption. Models with prompt caching often cost less than models with lower list prices, at real-world usage patterns.
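The caching point is easy to check with arithmetic. In the sketch below every number is a hypothetical placeholder, not any provider's actual rate: Model A has the higher list price but bills repeated prompt tokens at a discounted cached rate, while Model B is cheaper on paper and has no caching. Cache-write surcharges and cache expiry, which some providers apply, are ignored.

```python
def input_cost_per_request(prompt_tokens: int, cached_tokens: int,
                           price_per_m: float, cached_price_per_m: float) -> float:
    """USD input cost when `cached_tokens` of the prompt bill at the cached rate."""
    fresh_tokens = prompt_tokens - cached_tokens
    return (fresh_tokens * price_per_m
            + cached_tokens * cached_price_per_m) / 1_000_000


# Hypothetical workload: a 20,000-token prompt whose first 18,000 tokens
# (system prompt plus reference documents) repeat on every request.
PROMPT, REUSED = 20_000, 18_000

# Hypothetical Model A: $3.00/M list price, cached reads billed at $0.30/M.
model_a = input_cost_per_request(PROMPT, REUSED, 3.00, 0.30)
# Hypothetical Model B: $2.00/M list price, no caching (cached rate = list rate).
model_b = input_cost_per_request(PROMPT, REUSED, 2.00, 2.00)

print(f"Model A: ${model_a:.4f}  Model B: ${model_b:.4f}")
```

Under these assumptions the model with the higher list price is the cheaper one per request, so it is worth running this calculation with your own prompt shapes and the rates your provider actually publishes.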

FAQ

Which AI model is best for coding in 2026?

Claude Opus 4.6 via Claude Code is the most capable for complex, multi-file agentic coding. GPT-5 is a strong competitor for agentic workflows in the OpenAI ecosystem. For pure code generation speed at lower cost, o4-mini and Codestral are competitive. For self-hosted: DeepSeek V3.2 and Llama 4 Maverick. Most serious developers use at least two models.

Is DeepSeek R1 as good as GPT-4o or Claude?

On reasoning benchmarks — mathematical problem solving, logical deduction — R1 is genuinely competitive. V3.2 narrows the gap further by integrating reasoning into tool-use natively. On general capability, V3.2 is competitive with GPT-4o and Claude Sonnet. The meaningful gap is ecosystem maturity, API reliability, compliance certifications, and tool use consistency.

What does 1M token context actually mean in practice?

Roughly 750,000 words in a single session. The useful limit is governed by faithfulness — whether the model attends to information at all positions equally. Gemini 2.5 Pro and Llama 4 Scout offer the largest windows in their respective categories.
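The 750,000-word figure implies roughly 0.75 words per token, a common rule of thumb for English text; real ratios vary by tokenizer and language. A quick sketch for checking whether a document is likely to fit a given window follows. The context sizes come from this article's comparison table, while `estimate_tokens`, `models_that_fit`, and the output reserve are illustrative assumptions.

```python
WORDS_PER_TOKEN = 0.75  # rule of thumb implied by "1M tokens ≈ 750,000 words"

# Context windows (in tokens) as listed in this article's comparison table.
CONTEXT_WINDOWS = {
    "Claude Sonnet 4.6": 200_000,
    "GPT-5": 256_000,
    "GPT-4o": 128_000,
    "Gemini 2.5 Pro": 1_000_000,  # listed as "1M+", so treated as a lower bound
}


def estimate_tokens(word_count: int) -> int:
    """Rough token estimate for English prose."""
    return round(word_count / WORDS_PER_TOKEN)


def models_that_fit(word_count: int, reserve_for_output: int = 8_000) -> list[str]:
    """Models whose window holds the document plus room for a reply."""
    needed = estimate_tokens(word_count) + reserve_for_output
    return [name for name, window in CONTEXT_WINDOWS.items() if window >= needed]


print(estimate_tokens(750_000))
print(models_that_fit(120_000))
```

Fitting inside the window is only the first check; faithfulness at depth still has to be tested on your own documents.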

Should I use one model or multiple models?

Multiple, with intention. One primary model for your core workload, one reasoning model for analysis, one low-cost model for high-volume tasks. Three models cover 95% of use cases.

Is Llama 4 good enough to replace commercial API models?

For many use cases, yes — if you have GPU infrastructure and ML ops capacity. Llama 4 Maverick competes with GPT-4o on general benchmarks. The gaps are in instruction following, tool use consistency, and the surrounding ecosystem of integrations.

What is MCP and why does it matter for model selection?

MCP is Anthropic's open standard for connecting AI models to external tools and data sources. Claude models are MCP-native. Other models support function calling, but MCP provides a more standardized interface for building agentic workflows. For enterprise agentic deployments, native MCP support reduces integration complexity.
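For a sense of what that standardized interface looks like on the wire, MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below only builds such a request as a string. The helper `build_tool_call` and the `search_docs` tool are hypothetical, and a real client would normally rely on an MCP SDK to handle transport and session setup.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id per call


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# `search_docs` and its arguments are hypothetical; a real server advertises
# its own tools through the `tools/list` method.
print(build_tool_call("search_docs", {"query": "rate limits"}))
```

Because every tool is invoked through the same envelope, a client can add new tools without writing new per-tool integration code.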

Read on FrankX.AI: AI Architecture, Music & Creator Intelligence
