Prompt Engineering in 2026: What Still Works

Intelligence Dispatches | March 19, 2026 | 13 min read

The prompting techniques that survived the model upgrades — structured output, chain-of-thought, few-shot, and what to stop doing.

Reading Goal

You will know which prompting techniques still matter in 2026, which ones the models outgrew, and how to get maximum quality from any frontier model.

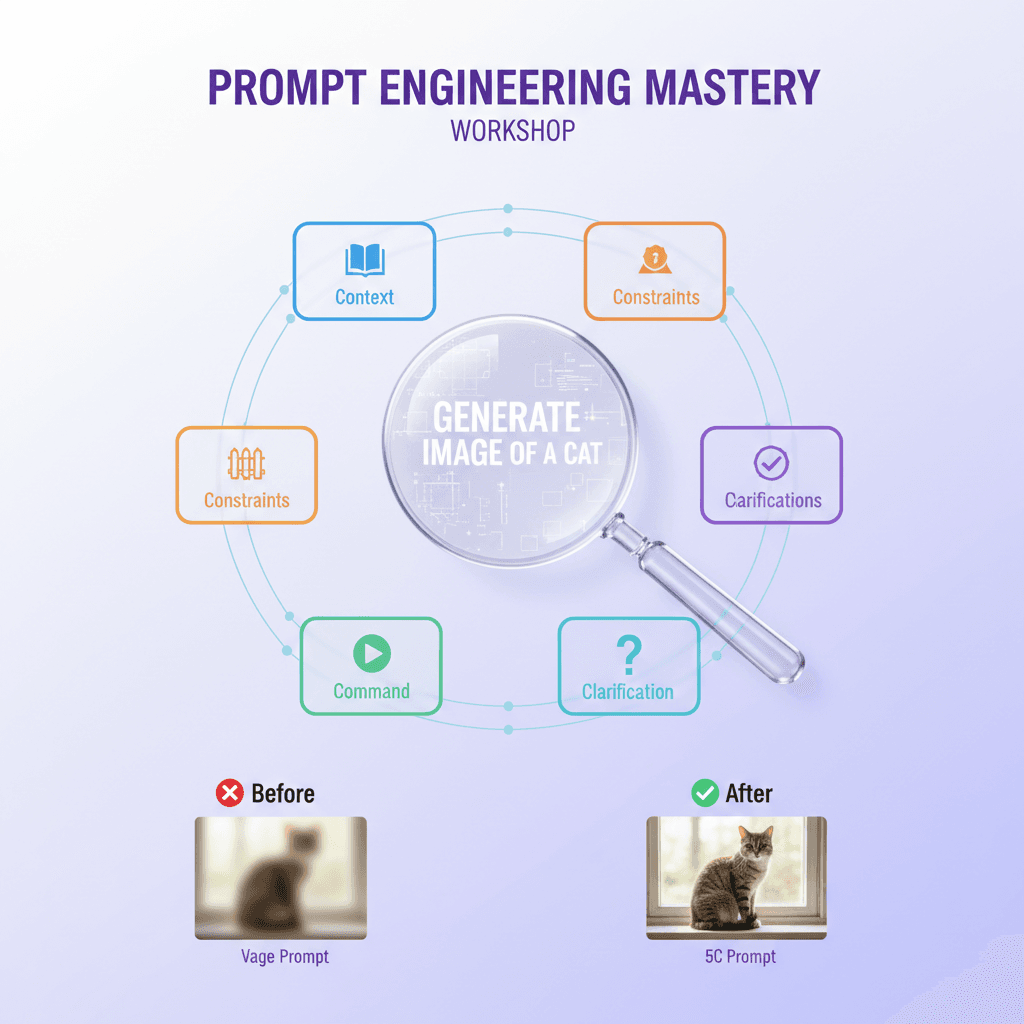

TL;DR: Most prompt engineering advice from 2024 is obsolete. Models got better at following instructions — so tricks that compensated for weak instruction-following are now unnecessary. What still works: structured output schemas, role-specific system prompts, few-shot examples for novel formats, and explicit constraint lists. What died: "think step by step" (models do this by default now), "you are an expert" (models already are), and elaborate persona backstories. This is what actually moves the needle in 2026.

What Actually Changed in the Models

Between mid-2024 and early 2026, frontier models went through a qualitative shift that most prompt engineering guides haven't caught up with.

The shift isn't just raw capability. It's instruction-following fidelity. The gap between what you ask for and what you get closed significantly. Earlier models needed prompting tricks to approximate good behavior. Current models follow explicit instructions with high fidelity — which means tricks that compensated for poor instruction-following became noise.

Here's the concrete change: in 2024, you had to work around the model's tendencies. In 2026, you can write down exactly what you want and get it. That sounds simple. The implication is not simple: every piece of advice built around compensating for a model's bad defaults is now obsolete.

I run a 74-prompt library at frankx.ai/prompt-library that I maintain actively. When I started it in early 2024, about 60% of the prompts included compensatory language — tricks to get the model to behave. Today, those prompts are the weakest ones in the library. The best-performing prompts are the ones that are simply precise and explicit, with no tricks at all.

That's what this article covers: what changed, what survived, and what to stop doing.

Techniques That Died (And Why)

"Think step by step"

This was the most-cited prompting technique of 2023-2024. The idea: adding "think step by step" or "let's think through this carefully" before a request triggers chain-of-thought reasoning that improves output quality.

It worked. It doesn't work anymore — not because the technique was wrong, but because the models internalized it. Frontier models reason step by step by default on non-trivial tasks. Telling them to do what they're already doing adds nothing except token count.

I tested this extensively across Claude 3.7 Sonnet and Claude Opus 4 in late 2025. On complex tasks, outputs with and without "think step by step" were statistically indistinguishable. On simple tasks, the phrase occasionally introduced unnecessary verbosity.

Retired. Move on.

"You are an expert [X]"

Another staple of the 2024 prompting playbook. The theory: declaring the model an expert in a domain primes it to respond with expert-level depth.

The problem: frontier models are already operating from extensive training on expert-level content. Telling Claude "you are an expert software engineer" doesn't add expertise that wasn't there. It's like telling a PhD that they have a PhD before asking them a question.

What does work — and this is a crucial distinction — is specifying the role's task focus and output requirements, not the expertise level. More on this in the system prompts section below.

Elaborate persona backstories

"You are Alex, a 15-year veteran of enterprise software architecture who has shipped 40+ production systems across Fortune 500 companies and believes deeply in clean code principles..."

I've seen prompts with backstories this long. The backstory adds almost nothing to output quality. The model doesn't become more capable by being given a biography. What it does do: increase token usage, add prompt fragility (the model has to track a complex persona), and obscure the actual instructions buried beneath the backstory.

The one thing persona backstories could provide — consistent voice — is now better achieved with explicit style instructions and few-shot examples.

Excessive politeness and filler

"Please could you help me with this? I would really appreciate if you could..." — this adds nothing to output quality and is frequently cargo-culted from early prompting guides that were written when model behavior was more sensitive to politeness framing.

Cut it. Models respond to precision, not politeness.

Repetition for emphasis

"This is very important. I cannot stress enough how important this is. Please make sure you..."

Repeating constraints doesn't make them more likely to be followed. If a constraint isn't being followed, the issue is usually that it's buried in prose rather than structured explicitly. Repetition compounds that problem.

Techniques That Still Work

Structured output with explicit schemas

This is the most consistently high-value technique across every model I've tested.

Instead of asking for output in prose and hoping the model structures it correctly, define the exact structure you want before the content. For JSON:

    Return a JSON object with exactly these fields:

    {
      "title": "string, max 60 characters",
      "description": "string, 120-160 characters",
      "tags": ["array of exactly 5 strings"],
      "category": "one of: Creator Systems | Intelligence Dispatches"
    }

    Do not include any other fields. Do not add explanation outside the JSON block.

This works because it converts a vague instruction ("give me structured output") into an unambiguous specification. The model knows exactly what to produce. Validation is straightforward. Integration into downstream systems is reliable.
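To make "validation is straightforward" concrete, here is a minimal sketch of a validator for the schema above. The function name `validate_metadata` and the error wording are illustrative assumptions, not part of the ACOS system; only the field rules come from the schema itself.

```python
import json

# Field rules copied from the schema in the prompt above.
EXPECTED_FIELDS = {"title", "description", "tags", "category"}
ALLOWED_CATEGORIES = {"Creator Systems", "Intelligence Dispatches"}


def validate_metadata(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the output passes.

    Hypothetical helper for illustration only.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not a JSON object"]
    if set(data) != EXPECTED_FIELDS:
        return [f"fields must be exactly {sorted(EXPECTED_FIELDS)}, got {sorted(data)}"]

    problems = []
    title, description = data["title"], data["description"]
    tags, category = data["tags"], data["category"]

    if not isinstance(title, str) or len(title) > 60:
        problems.append("title must be a string of at most 60 characters")
    if not isinstance(description, str) or not 120 <= len(description) <= 160:
        problems.append("description must be a string of 120-160 characters")
    if (not isinstance(tags, list) or len(tags) != 5
            or not all(isinstance(tag, str) for tag in tags)):
        problems.append("tags must be an array of exactly 5 strings")
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        problems.append("category must be one of the allowed values")
    return problems
```

Because every rule in the prompt is exact, each one maps to a one-line check. A vague instruction such as "keep the title short" could not be validated this way.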

I use this pattern across the ACOS skill system. Every skill file that generates structured data includes an explicit schema. The output consistency is dramatically better than prose-then-parse approaches.

For non-JSON structures, the same principle applies: define the exact sections, lengths, and format before asking for content.

Role-based system prompts with task focus

"You are an expert X" is dead. Role-based system prompts are very much alive — but the key is specificity about what the role does, not what it knows.

Weak: "You are an expert content strategist."

Strong:

    You are a content strategist reviewing blog posts for publication on frankx.ai.

    Your job:
    - Check that the title is ≤60 characters
    - Verify the description is 120-160 characters
    - Confirm exactly 5 tags are present
    - Flag any banned words: landscape, comprehensive, delve, dive into
    - Report: PASS or FAIL with specific issues listed

The strong version specifies the task, the criteria, and the output format. The model knows exactly what to check and how to report it. Expertise is implicit in the capability; task focus is what you need to specify.
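One way to keep system prompts in this shape is to build them from structured fields instead of free text. The sketch below is an assumption about how that could look; the `RolePrompt` class is hypothetical and is not how ACOS skill files are actually stored.

```python
from dataclasses import dataclass, field


@dataclass
class RolePrompt:
    """Hypothetical container for a task-focused system prompt."""
    role: str                                         # who the model is acting as, in one sentence
    checks: list[str] = field(default_factory=list)   # the concrete tasks to perform
    report_format: str = ""                           # how results should be reported

    def render(self) -> str:
        # A role with no tasks is the weak "you are an expert X" pattern, so reject it.
        if not self.checks:
            raise ValueError("a role prompt needs at least one concrete task")
        lines = [self.role, "", "Your job:"]
        lines += [f"- {check}" for check in self.checks]
        if self.report_format:
            lines.append(f"- Report: {self.report_format}")
        return "\n".join(lines)
```

Rendering with the role sentence, the four checks, and the report format from the strong example reproduces that prompt. Rendering with only "You are an expert content strategist." raises an error, which is the point of the design.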

This is the structure I use for every skill in the ACOS system. Each skill is essentially a production-grade system prompt that defines a specific agent's role and task focus with precision. The 22+ skills in ACOS v10 are the result of iterating on this approach for 18 months.

Few-shot examples for novel formats

When you need output in a format the model hasn't encountered often — a specific MDX structure, a proprietary data format, an unusual report layout — few-shot examples are still the most reliable technique.

The model is pattern-matching. If you give it three examples of the exact format you want, it pattern-matches to that format. This works better than extensive description of the format.

Important: the examples need to be high-quality. One excellent example is more valuable than three mediocre ones. The model will pattern-match to quality, not just structure.

For the frankx.ai blog content pipeline, the /frankx-ai-blog slash command includes two example frontmatter blocks and one example section structure. Output quality with those examples versus without is noticeably higher, particularly for maintaining the exact character counts in title and description.
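As a rough illustration of the pattern (not the actual /frankx-ai-blog command, whose contents are not shown here), a few-shot prompt can be assembled from input/output pairs. The function name and the "Example N input/output" labels are assumptions chosen for this sketch.

```python
def build_few_shot_prompt(
    instruction: str,
    examples: list[tuple[str, str]],
    new_input: str,
) -> str:
    """Assemble a prompt that shows the target format before asking for it.

    Hypothetical helper: each example is an (input, output) pair.
    """
    if not examples:
        raise ValueError("few-shot prompting needs at least one example")
    parts = [instruction.strip(), ""]
    for number, (example_input, example_output) in enumerate(examples, start=1):
        parts += [
            f"Example {number} input:",
            example_input.strip(),
            f"Example {number} output:",
            example_output.strip(),
            "",
        ]
    # End on an open "Output:" label so the model continues in the demonstrated format.
    parts += ["Input:", new_input.strip(), "Output:"]
    return "\n".join(parts)
```

Keeping the examples as data makes it easy to swap a mediocre example for a better one without touching the rest of the prompt.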

Explicit constraint lists

Constraints work better when they're itemized, not embedded in prose.

Prose constraint:

"Please make sure the title isn't too long, avoid using jargon that's too technical for general audiences, and try not to use passive voice if possible."

Three problems with this: "too long" is undefined, "too technical" is subjective, and "if possible" signals the constraint is optional.

Explicit constraint list:

    CONSTRAINTS (non-negotiable):
    - Title: ≤60 characters (count each character, including spaces)
    - Technical terms: define on first use
    - Voice: active only; rewrite any passive constructions
    - Banned words: landscape, comprehensive, delve, dive into

The difference in compliance between these two formulations is measurable. Itemized, specific, labeled as non-negotiable: models follow these with high fidelity. Embedded prose with hedging: treated as preferences.

This principle applies to any constraint. Define it precisely. Separate it from the main instruction. Label it clearly.
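A side benefit of precise constraints is that some of them can be checked mechanically after generation. The sketch below checks two of the constraints from the list above; the function names are illustrative assumptions, and the word-boundary match is deliberately simple, so it will not catch inflected forms such as "delves".

```python
import re

# Banned phrases copied from the constraint list above.
BANNED_WORDS = ["landscape", "comprehensive", "delve", "dive into"]


def find_banned(text: str, banned: list[str] = BANNED_WORDS) -> list[str]:
    """Return the banned phrases that appear in text, case-insensitively."""
    hits = []
    for phrase in banned:
        # \b keeps "delve" from matching inside a longer word.
        pattern = r"\b" + re.escape(phrase) + r"\b"
        if re.search(pattern, text, flags=re.IGNORECASE):
            hits.append(phrase)
    return hits


def check_constraints(title: str, body: str) -> list[str]:
    """Return violations of the title-length and banned-word constraints."""
    problems = []
    if len(title) > 60:
        problems.append(f"title is {len(title)} characters, limit is 60")
    for phrase in find_banned(title + "\n" + body):
        problems.append(f"banned word used: {phrase}")
    return problems
```

Constraints like "define technical terms on first use" or "active voice only" are harder to verify in code and still need a human or model reviewer.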

Temperature as a precision tool

Temperature is underused by most people and misunderstood by most guides.

The mental model: temperature controls how the model samples from its probability distribution at each token. The model's raw scores (logits) are divided by the temperature before sampling. A low temperature (0.0-0.3) concentrates probability on the highest-probability next token: consistent, predictable, precise. A high temperature (0.8-1.2) flattens the distribution, so the model samples more randomly: more varied, potentially more creative, and more likely to drift from constraints.
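The effect is easy to see with a toy example. This is a minimal sketch of temperature-scaled softmax over made-up logits; real APIs apply the same idea over the full vocabulary, and they typically treat a temperature of exactly 0 as "always pick the top token" rather than dividing by zero.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert logits to probabilities after dividing them by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [logit / temperature for logit in logits]
    # Subtract the max before exponentiating for numerical stability.
    highest = max(scaled)
    exps = [math.exp(value - highest) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


# Illustrative logits for three candidate tokens (invented numbers).
toy_logits = [2.0, 1.0, 0.5]
low = softmax_with_temperature(toy_logits, 0.2)
high = softmax_with_temperature(toy_logits, 1.0)
print(f"top-token probability at T=0.2: {low[0]:.3f}")
print(f"top-token probability at T=1.0: {high[0]:.3f}")
```

At the lower temperature almost all of the probability mass sits on the top token, which is why strict-format prompts behave more predictably there.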

For structured output with strict formatting requirements: temperature 0.0-0.2. Consistent, reliable, validates predictably.

For creative generation where variation is valuable: temperature 0.7-0.9. Different outputs on each run, more unexpected word choices.

For technical writing that needs to be accurate but not robotic: temperature 0.3-0.5. Precise enough to stay on specification, varied enough to not read like a template.

I set temperature explicitly for every production prompt in the ACOS skill library. The default (often 1.0, depending on the API) is appropriate for general conversation but suboptimal for structured production tasks.

Negative space constraints

This is subtle but consistently effective: define what you don't want alongside what you do want.

Not "write a confident introduction" — write "write a confident introduction that leads with a concrete result, not a question and not a broad claim about the industry."

The negative constraint anchors the model away from the most common failure modes. It's particularly useful for tone and style, where positive descriptions can be interpreted many ways.

The frankx.ai brand voice guidelines use this structure throughout. For every positive instruction ("lead with results"), there's a corresponding negative anchor ("not spiritual language, not grandiose claims"). The combination produces more consistent output than either alone.

The FrankX Prompting Principles

After 74+ production prompts across the prompt library and 22+ ACOS skills, three principles consistently separate high-performing prompts from mediocre ones:

Specificity beats length. A 50-word prompt with exact specifications outperforms a 500-word prompt with vague guidance. Every word in a prompt should be doing work. If a sentence could be removed without changing the model's behavior, remove it.

Constraints beat instructions. Telling the model what to produce is weaker than defining the boundaries within which to produce it. Instructions describe an ideal. Constraints define a space the output must occupy. Constraints are more reliable.

Examples beat descriptions. If you can show the model what you want, show it. Don't describe the format — provide the format. One well-chosen example is worth 200 words of description.

These principles sound simple. Applying them consistently is the actual work. Most prompts fail not because the technique is wrong but because the specificity is insufficient, the constraints are embedded in prose instead of itemized, or there's a description where an example would serve better.

Production Prompts Are Skills

The highest-leverage insight from building the ACOS system: a production-grade prompt is a reusable asset, not a one-time input.

The prompts I use daily — for blog writing, code review, content atomization, deployment monitoring — are stored as skill files with defined inputs, outputs, and constraints. They get refined over time. When a prompt produces a bad output, I update the skill file rather than just rerunning with a tweak.

This is the ACOS approach to prompting: treat prompts as software. Version them. Test them. Refine them based on output quality over time. The prompt engineering workshop covers this approach in depth — specifically how to build a prompting system that improves rather than stagnates.
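The actual ACOS skill file format is not shown in this article, so the following is only a sketch of what "prompts as software" can mean in practice: a named, versioned template with a pinned temperature and a regression check. Every name here (`Skill`, `TITLE_SKILL`, the version string) is hypothetical.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class Skill:
    """Hypothetical stored prompt: versioned, with declared inputs and settings."""
    name: str
    version: str
    temperature: float
    template: Template

    def render(self, **inputs: str) -> str:
        # Template.substitute raises KeyError when a required input is missing,
        # so an incomplete call fails loudly instead of sending a broken prompt.
        return self.template.substitute(**inputs)


TITLE_SKILL = Skill(
    name="title-writer",
    version="1.2.0",
    temperature=0.2,
    template=Template(
        "Write a title for the post below.\n\n"
        "CONSTRAINTS (non-negotiable):\n"
        "- Title: ≤60 characters\n\n"
        "POST:\n$post"
    ),
)


def test_title_skill_keeps_its_constraint() -> None:
    # Regression check: an edit to the skill must not drop the length constraint.
    rendered = TITLE_SKILL.render(post="Draft text")
    assert "≤60 characters" in rendered
```

When a bad output leads to a prompt change, the change goes into the template, the version is bumped, and a check like the one above guards against losing the fix later.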

The alternative — writing prompts from scratch every time, not storing what works — is the equivalent of not writing functions in code. You're doing the same work repeatedly, and you're never accumulating leverage.

If you're building an AI-native system for your own work, the ACOS framework provides the architecture for organizing these prompts, skills, and agents into a coherent operating system rather than a loose collection of notes.

For a reference implementation of what production prompts look like across multiple domains — content, code, research, music — the frankx.ai prompt library has 74 prompts in active use, categorized by use case and model.

What to Audit in Your Current Prompts

If you have an existing prompt library or collection of prompts you use regularly, here's a rapid audit protocol:

Remove: "think step by step", "you are an expert", elaborate persona backstories, excessive politeness, constraint repetition.

Restructure: Move constraints out of prose into itemized lists. Add explicit schemas for any structured output. Replace descriptions of format with examples.

Precision-check: For every instruction, ask: is this specific enough that a junior team member could follow it without asking clarifying questions? If not, it's too vague.

Temperature-tag: Note the temperature appropriate for each prompt. Structured output prompts should run at 0.0-0.2, technical writing at 0.3-0.5, and creative prompts at 0.7-0.9.

In my experience, a prompt audit using this checklist improves output quality noticeably on about 60% of prompts and dramatically on about 20%. The remaining 20% were already well-structured.
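The "Remove" and "Restructure" steps of the audit can be partly automated with a first-pass scan. The patterns below are assumptions chosen to match the phrases quoted in this article; they flag candidates for review and will miss paraphrases.

```python
import re

# Label -> regex for phrasing the audit suggests removing or restructuring.
# Hypothetical pattern set; extend it to match your own library.
AUDIT_PATTERNS = {
    "step-by-step trigger": r"\bstep[ -]by[ -]step\b",
    "expert declaration": r"\byou are an? expert\b",
    "politeness filler": r"\b(?:please could you|i would really appreciate)\b",
    "emphasis repetition": r"\b(?:very important|cannot stress enough)\b",
    "hedged constraint": r"\b(?:if possible|try not to)\b",
}


def audit_prompt(prompt: str) -> list[str]:
    """Return the labels of every audit pattern found in the prompt."""
    return [
        label
        for label, pattern in AUDIT_PATTERNS.items()
        if re.search(pattern, prompt, flags=re.IGNORECASE)
    ]
```

A flagged prompt is not automatically wrong. A request for reasoning in a specific format may legitimately mention steps, so treat the output as a review queue rather than a verdict.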

FAQ

Does chain-of-thought reasoning still have value if "think step by step" is dead?

Yes — but the mechanism changed. The technique of explicitly requesting step-by-step reasoning is less effective because models do it by default on complex tasks. What does still work: asking models to show their reasoning in a specific format, or asking them to reason about a problem in a structured section before producing output. The behavior is the same; the instruction needs to be more specific about what "showing reasoning" looks like.
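One common way to make "showing reasoning" specific is to ask for it in a delimited section and parse the answer out separately. The tag names and helper below are assumptions for illustration, not a format any particular model requires.

```python
import re

# Instruction text to append to a prompt; the tag names are arbitrary choices.
REASONING_INSTRUCTION = (
    "First write your analysis inside <reasoning></reasoning> tags.\n"
    "Then write only the final answer inside <answer></answer> tags."
)


def split_reasoning(response: str) -> tuple[str, str]:
    """Return (reasoning, answer) extracted from a tagged model response."""
    reasoning = re.search(r"<reasoning>(.*?)</reasoning>", response, flags=re.DOTALL)
    answer = re.search(r"<answer>(.*?)</answer>", response, flags=re.DOTALL)
    if answer is None:
        raise ValueError("response has no <answer> section")
    reasoning_text = reasoning.group(1).strip() if reasoning else ""
    return reasoning_text, answer.group(1).strip()
```

Downstream code then consumes only the answer, while the reasoning section stays available for debugging a bad output.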

How do I know if my prompt is too long?

A simple test: remove the last 20% of the prompt and test the output. If output quality is unchanged, that 20% was not contributing. Repeat until removing content degrades output. The remaining text is your minimum effective prompt. Most prompts have 30-50% content that can be cut without quality loss.
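The trimming loop can be sketched as follows. It assumes you supply a `quality` function that runs the prompt and scores the output; that function is the hard part and is left hypothetical here. The sketch cuts by lines and compares each trimmed version against the original prompt's score.

```python
from typing import Callable


def minimum_effective_prompt(
    prompt: str,
    quality: Callable[[str], float],
    tolerance: float = 0.0,
    cut: float = 0.2,
) -> str:
    """Repeatedly drop the last `cut` fraction of lines while quality holds.

    `quality` is a caller-supplied scorer (an assumption of this sketch);
    higher scores mean better output.
    """
    if not 0 < cut < 1:
        raise ValueError("cut must be between 0 and 1")
    lines = prompt.splitlines()
    baseline = quality(prompt)
    while len(lines) > 1:
        # Always remove at least one line so the loop makes progress.
        keep = min(max(1, int(len(lines) * (1 - cut))), len(lines) - 1)
        candidate = lines[:keep]
        if quality("\n".join(candidate)) < baseline - tolerance:
            break  # this cut degraded the output, so keep the previous version
        lines = candidate
    return "\n".join(lines)
```

Cutting from the end only works when the least important material is last. If key constraints sit at the bottom of the prompt, the first cut fails and the prompt is returned unchanged, which is a hint to reorder before trimming.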

Do these techniques apply equally across Claude, GPT-4o, and Gemini?

Broadly yes, with model-specific tuning. Structured output schemas and explicit constraints work across all frontier models. The specific phrasing that triggers good behavior varies: Claude responds well to explicit role and task framing, GPT-4o is particularly responsive to few-shot examples, and Gemini tends to do well with explicit output length constraints. The underlying principles apply everywhere; calibrate based on which model you're running.

Are there cases where "you are an expert" still adds value?

Occasionally, when you need a specific epistemic stance rather than expertise. "You are a skeptical reader who has seen 100 pitches and is not easily impressed" — this works because it's specifying a perspective, not expertise. The persona frames how the model approaches evaluation, not what it knows. That's meaningfully different from "you are an expert marketer."

How often should I update my prompt library?

Model-triggered: whenever a major model version ships, re-test your top 10 most-used prompts. The improvement in instruction-following often means you can simplify prompts that included compensatory language for older models. Also update whenever output quality drifts — which is a signal that the model's defaults changed and your prompt is compensating for behavior that no longer exists.

This article draws from the frankx.ai prompt library — 74 production prompts maintained actively as frontier models evolve. The ACOS system is the framework that organizes these prompts, skills, and agents into a coherent operating system for AI-native creators.
