DeepSeek R1: What Open-Weight Reasoning Actually Means

Intelligence Dispatches | March 31, 2026 | 12 min read

The model that disrupted AI pricing. How DeepSeek R1 works, where it excels, and what it means for builders choosing between open and closed models.

Reading Goal

You will understand DeepSeek R1's architecture, where it genuinely competes with closed models, and when to use it instead of Claude or GPT.

Updated March 31, 2026: Added coverage of DeepSeek V3.1, V3.2, and V3.2-Speciale releases since R1.

TL;DR: DeepSeek R1 produces explicit chain-of-thought reasoning traces that are visible, auditable, and fine-tunable. V3 uses a 671B parameter MoE architecture with only 37B active per forward pass, achieving near-GPT-4o performance at a fraction of the cost. The open-weight nature means you can self-host, fine-tune on proprietary data, and run inference without per-token API fees. The tradeoff when you self-host: no managed service, no SLA, and tool-use consistency that lags closed models. For cost-sensitive production and research, DeepSeek changed the calculation permanently.

When the training cost figure for DeepSeek V3 ($5.6 million for a frontier-class model, published in the December 2024 technical report) caught wide attention in January 2025, it landed differently than most model releases. Not because V3 was the best model on every benchmark. It was better than that: it proved that frontier performance no longer required frontier-scale spending.

I've been running DeepSeek models in production alongside Claude and Gemini for several months now. Here is what actually matters about the architecture, where it holds up, and where the closed models still have an edge.

What Is the MoE Architecture and Why Does It Matter?

DeepSeek V3 is a Mixture of Experts model — 671 billion total parameters, but only 37 billion are active during any single forward pass. The MoE architecture routes each input token to a subset of specialized "expert" networks rather than running the full model every time.
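To make the routing idea concrete, here is a minimal top-k routing sketch in plain Python. It is a toy illustration, not DeepSeek's implementation: the four scalar "experts", the router scores, and the function names `route_token` and `moe_forward` are all made up for the example, and a real model uses a learned router over many more experts.

```python
import math

def route_token(scores, k):
    """Pick the k highest-scoring experts and normalize their weights."""
    top = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    # Softmax over the selected experts only, so their weights sum to 1.
    peak = max(scores[i] for i in top)
    exps = {i: math.exp(scores[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}

def moe_forward(x, experts, scores, k=2):
    """Weighted sum of the outputs of the selected experts only."""
    weights = route_token(scores, k)
    return sum(w * experts[i](x) for i, w in weights.items())

# Four toy "experts"; each is just a scalar function here (hypothetical).
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: -x, lambda x: x * x]
router_scores = [0.1, 2.0, -1.0, 2.0]  # hypothetical router scores for one token

weights = route_token(router_scores, k=2)
output = moe_forward(3.0, experts, router_scores, k=2)
print(weights, output)
```

Only two of the four experts run for this token. The other two cost nothing at inference time, which is the whole point of the design.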

This is not a new idea. Google used sparse MoE in Switch Transformer (2021), and Mistral AI's Mixtral brought it to the open-weight world in 2023. What DeepSeek did differently is the engineering execution: their expert routing is more granular, and their training stability technique (they call it "auxiliary-loss-free load balancing") let the model train without the instability that plagues many large MoE systems.
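As I understand the published description, auxiliary-loss-free load balancing works by giving each expert a bias that influences which experts get selected, then nudging that bias down for overloaded experts and up for underloaded ones after each training step. The sketch below shows that update rule in isolation. The step size, load counts, and function names are hypothetical, and this is a simplification rather than DeepSeek's code.

```python
def update_biases(biases, expert_loads, step=0.001):
    """Nudge each expert's routing bias toward a balanced load."""
    mean_load = sum(expert_loads) / len(expert_loads)
    new_biases = []
    for bias, load in zip(biases, expert_loads):
        if load > mean_load:
            new_biases.append(bias - step)  # overloaded: less likely to be picked
        elif load < mean_load:
            new_biases.append(bias + step)  # underloaded: more likely to be picked
        else:
            new_biases.append(bias)
    return new_biases

def select_experts(scores, biases, k):
    """The bias changes which experts are chosen, not how outputs are weighted."""
    biased = [s + b for s, b in zip(scores, biases)]
    return sorted(range(len(scores)), key=lambda i: biased[i], reverse=True)[:k]

biases = [0.0, 0.0, 0.0, 0.0]
loads = [90, 5, 3, 2]  # hypothetical tokens routed to each expert in one batch
biases = update_biases(biases, loads, step=0.5)  # large step so the effect is visible
chosen = select_experts([1.0, 0.9, 0.2, 0.1], biases, k=1)
print(biases, chosen)
```

Because balancing happens through the selection bias instead of an extra loss term, the main training objective is not pulled in a second direction.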

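The practical consequence: per-token compute scales with active parameters, not total parameters. A forward pass with 37B active parameters needs roughly half the compute per token of a dense 70B model, while the 671B total parameter pool gives the model the knowledge depth of a much larger system. The catch is memory: all 671B parameters still have to be loaded, so the savings show up in compute and throughput rather than in hardware footprint.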
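The arithmetic behind that claim is short enough to write out. This sketch uses the common rule of thumb of about 2 FLOPs per active parameter per generated token, which is an approximation and not a figure from DeepSeek.

```python
TOTAL_PARAMS = 671e9   # all experts combined
ACTIVE_PARAMS = 37e9   # parameters actually used per token
DENSE_70B = 70e9       # a dense model uses every parameter on every token

def flops_per_token(active_params):
    # Rule of thumb (assumption): ~2 FLOPs per active parameter per token.
    return 2 * active_params

active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
ratio_vs_dense_70b = flops_per_token(ACTIVE_PARAMS) / flops_per_token(DENSE_70B)

print(f"active fraction: {active_fraction:.1%}")
print(f"compute per token vs dense 70B: {ratio_vs_dense_70b:.2f}x")
```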

For builders thinking about cost at scale, this arithmetic changes everything. You can serve near-GPT-4-class intelligence at GPT-3.5-class compute costs.

How R1 Reasoning Works: Visible Thinking

DeepSeek R1 is the reasoning-specialized variant trained on top of V3. The defining characteristic is explicit chain-of-thought traces: the model outputs its intermediate reasoning steps before giving a final answer. These traces are not a UI trick — they are part of the actual token stream, visible in the raw API response.

This is architecturally significant for three reasons:

1. Auditable reasoning. When R1 works through a math proof or debugging task, you can read the steps. You can see where it went wrong, where it corrected itself, and why it reached its conclusion. Closed reasoning models such as OpenAI's o1 and Claude with extended thinking also reason before answering, but the reasoning is not fully exposed in the same unfiltered way.

2. Fine-tunable reasoning style. Because the reasoning traces are explicit text, you can fine-tune a distilled R1 variant on domain-specific reasoning patterns. A legal firm can train on case analysis reasoning. A research lab can train on methodology reasoning. The reasoning process becomes trainable, not just the final answer.

3. Distillation quality. DeepSeek released distilled versions (including 8B, 14B, 32B, and 70B), built by fine-tuning smaller open Qwen and Llama models on R1's reasoning traces. The 32B distill outperforms models of similar size on math and coding tasks because it learned how to reason, not just what to answer.
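Since the trace is ordinary text in the output, separating it from the final answer takes a few lines. This sketch assumes the reasoning is wrapped in `<think>...</think>` tags, as it is in the raw output of the open-weight checkpoints I have run. A hosted API may return the reasoning in a separate field instead, so check what your serving stack actually emits. The function name `split_reasoning` is my own.

```python
import re

# Assumption: the model wraps its reasoning in <think>...</think> tags.
THINK_PATTERN = re.compile(r"<think>(.*?)</think>", re.DOTALL)

def split_reasoning(raw_output):
    """Return (reasoning_trace, final_answer) from raw model text."""
    match = THINK_PATTERN.search(raw_output)
    if match is None:
        return "", raw_output.strip()
    reasoning = match.group(1).strip()
    answer = raw_output[match.end():].strip()
    return reasoning, answer

sample = "<think>\n17 * 3 = 51, then 51 + 4 = 55.\n</think>\nThe result is 55."
reasoning, answer = split_reasoning(sample)
print("TRACE:", reasoning)
print("ANSWER:", answer)
```

Once the trace is split out, you can log it for audits or collect it as fine-tuning data, which is what the three points above depend on.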

Benchmark Performance: Where It Wins, Where It Lags

On the benchmarks that matter for reasoning-heavy work (MATH-500, AIME, LiveCodeBench, SWE-bench), R1 competes directly with Claude Opus and OpenAI o1. On MATH-500, R1 scores above 97%, comparable to o1. On AIME 2024, a competition-level math benchmark, R1 achieves scores that match or exceed o1-preview (now succeeded by o3/o4-mini).

Where the numbers tell a different story:

Instruction following. Claude Sonnet 3.7 (now Sonnet 4.6) and GPT-4o show better consistency on complex multi-step instruction following tasks — particularly when instructions involve nuanced constraints or require precise formatting. R1 occasionally loses track of constraints mid-reasoning.

Tool use and function calling. This is the most significant practical gap. Closed models — Claude especially — have spent enormous engineering effort on reliable, structured tool use. DeepSeek's tool-use consistency in agentic workflows is improving but still lags in edge cases. If your production workflow depends on reliable multi-step tool calls, this is a real consideration.

Multilingual performance. DeepSeek has strong Chinese language performance given the training distribution. English performance is genuinely excellent. Other European languages show more variability than GPT-4o.

Creative and open-ended generation. Claude Opus-class models still produce qualitatively better creative writing and nuanced prose. R1 optimizes for correctness in structured tasks, which sometimes makes creative outputs feel more mechanical.

The Cost Disruption: What the Numbers Actually Say

The training cost figure ($5.6M for V3) shocked the industry because it was roughly 10-20x lower than outside estimates for comparable closed-model training runs. Note that the figure covers the GPU time of the final training run, not the research and experiments that preceded it. The inference cost disruption was more immediate.

DeepSeek's API pricing at launch: $0.27 per million input tokens for V3, compared to $2.50/M for GPT-4o and $3.00/M for Claude Sonnet. That is roughly a 9x to 11x cost difference on input tokens for comparable task quality on coding and reasoning tasks.

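For applications where you are processing large volumes of text (classification, extraction, summarization at scale), the math is straightforward. If you are spending $10,000/month on a closed API for document processing, the same workload could cost roughly $1,000 on DeepSeek V3 at the input prices above. Self-hosting replaces per-token fees with hardware and operations costs, so the actual saving depends on how well you keep the GPUs utilized.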
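Here is that calculation as a small script, using the $0.27 and $3.00 per-million input prices quoted above. It counts input tokens only and ignores output pricing, so treat the result as a rough bound rather than a forecast.

```python
def monthly_cost(tokens_millions, price_per_million):
    """Cost in dollars for a monthly token volume at a per-million price."""
    return tokens_millions * price_per_million

CLOSED_PRICE = 3.00    # $/M input tokens, closed-model figure from the text
DEEPSEEK_PRICE = 0.27  # $/M input tokens, DeepSeek V3 figure from the text

closed_budget = 10_000.0                          # current monthly spend
tokens_millions = closed_budget / CLOSED_PRICE    # implied monthly volume
deepseek_cost = monthly_cost(tokens_millions, DEEPSEEK_PRICE)
ratio = CLOSED_PRICE / DEEPSEEK_PRICE

print(f"volume: {tokens_millions:,.0f}M tokens/month")
print(f"DeepSeek cost: ${deepseek_cost:,.0f} ({ratio:.1f}x cheaper)")
```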

This is not hypothetical. I have moved several batch processing pipelines from closed APIs to DeepSeek distills running on local inference, and the quality difference on structured extraction tasks is minimal. The savings are real.

Practical Use Cases Where DeepSeek Earns Its Place

Self-hosted inference on proprietary data. This is the strongest case. Legal, medical, and financial organizations with data residency requirements cannot send sensitive data to US-based APIs. A self-hosted DeepSeek V3 instance on their own infrastructure changes the calculus entirely. Open weights means you control the deployment.

Fine-tuning on domain-specific reasoning. The explicit reasoning traces in R1 make it uniquely well-suited for domain adaptation. A system that reasons visibly is a system where you can identify where the reasoning breaks down for your domain, then fine-tune to fix it.

Research and evaluation workflows. When I am testing prompt strategies or evaluating whether a particular approach generalizes, running the experiments through DeepSeek distills locally is fast and cheap. I save the expensive closed-model API calls for final validation.

Air-gapped deployments. For any deployment where network access is restricted (on-premises enterprise, edge computing, regulated environments), open weights are the only path. DeepSeek's 8B distill runs on a single consumer GPU, and the 14B does too once quantized.

Cost-sensitive batch processing. Bulk summarization, classification, entity extraction, code review automation. Tasks where quality needs to be "good enough" and volume is high. DeepSeek V3 sits at the quality level where "good enough" covers the majority of these tasks.

Where Closed Models Still Win

I want to be precise here because the nuance matters for architecture decisions.

Agentic workflows with tool calling. If you are building multi-step agents that use tools — web search, code execution, API calls — Claude's tool-use reliability is noticeably better. The difference shows up in edge cases: malformed arguments, unexpected tool responses, recovery from errors. For high-stakes automation, the reliability gap costs more than the price difference saves.
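Whichever model you use, the edge cases above are worth guarding against in your own code. A minimal sketch of validating tool-call arguments before executing anything follows. The `SEARCH_SCHEMA` tool signature and the function name are hypothetical, and a production system would likely use a full JSON Schema validator instead.

```python
import json

def validate_tool_call(raw_arguments, required_fields):
    """Return (args, error). error is None when the call is usable."""
    try:
        args = json.loads(raw_arguments)
    except json.JSONDecodeError as exc:
        return None, f"arguments are not valid JSON: {exc.msg}"
    if not isinstance(args, dict):
        return None, "arguments must be a JSON object"
    for name, expected_type in required_fields.items():
        if name not in args:
            return None, f"missing field: {name}"
        if not isinstance(args[name], expected_type):
            return None, f"wrong type for field: {name}"
    return args, None

SEARCH_SCHEMA = {"query": str, "max_results": int}  # hypothetical tool signature

ok_args, ok_err = validate_tool_call(
    '{"query": "moe routing", "max_results": 3}', SEARCH_SCHEMA)
bad_args, bad_err = validate_tool_call(
    '{"query": "moe routing", "max_results": "3"}', SEARCH_SCHEMA)
broken_args, broken_err = validate_tool_call(
    '{"query": "moe routing"', SEARCH_SCHEMA)
print(ok_err, bad_err, broken_err, sep="\n")
```

Feeding the error string back to the model as the tool result gives it a chance to repair the call, and counting how often that happens is a cheap way to measure the reliability gap on your own workload.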

Complex instruction adherence. Multi-constraint instructions — "write in this voice, at this length, for this audience, avoiding these topics, formatted exactly like this" — Claude handles with more consistency. R1 tends to drop constraints as the reasoning chain gets longer.

SLA and support. Anthropic and OpenAI provide managed APIs with uptime guarantees, rate limit management, and support contracts. If you are building a production product where a model outage is a P0 incident, this infrastructure matters. Self-hosted DeepSeek means you own the reliability problem.

Safety and alignment tooling. Closed model providers invest heavily in constitutional AI, RLHF, and safety evaluation frameworks. DeepSeek's safety work is present but less mature. For consumer-facing applications where safety properties need to be auditable and defensible, this matters.

My Multi-Model Workflow

I run a tiered model strategy in production, and DeepSeek occupies a specific and permanent slot.

Claude Sonnet / Claude Opus — complex reasoning with tool use, multi-step agentic tasks, anything requiring deep instruction adherence, creative work, and client-facing output where quality is paramount. This is my primary working model.

DeepSeek R1 / V3 — batch processing, research and experimentation pipelines, cost-sensitive summarization and classification at volume, fine-tuning experiments, any workflow where I want to inspect the reasoning trace for debugging.

Gemini Flash — speed-critical tasks, real-time applications, anything where latency matters more than reasoning depth. Multimodal where I need both image and text in one call.

Local distills (14B/32B) — air-gapped workflows, local code analysis, private data processing, fast iteration where I do not want API round-trips.

The key insight: these are not competing tools — they are a portfolio. The question for any task is not "which model is best" but "which model is best for this specific combination of quality requirements, cost sensitivity, latency needs, and data privacy constraints."
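One way to make the portfolio explicit is to encode the decision as a function. The sketch below is a simplified version of the tiers described above. The `Task` fields, the tier labels, and the priority order are illustrative assumptions, not a description of any particular routing library.

```python
from dataclasses import dataclass

@dataclass
class Task:
    needs_tools: bool = False
    private_data: bool = False
    latency_critical: bool = False
    high_volume: bool = False

def pick_tier(task):
    """Return a tier label; order encodes priority (an assumption)."""
    if task.private_data:
        return "local-distill"   # data constraints override everything else
    if task.needs_tools:
        return "claude"          # reliability of multi-step tool use
    if task.latency_critical:
        return "gemini-flash"    # speed over reasoning depth
    if task.high_volume:
        return "deepseek"        # cost-sensitive batch work
    return "claude"              # default working model

print(pick_tier(Task(high_volume=True)))
print(pick_tier(Task(private_data=True, needs_tools=True)))
```

The useful part is less the code than the forced ordering: writing it down makes you decide which constraint wins when two of them conflict.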

DeepSeek changed the portfolio calculation. Before R1, the choice was essentially: pay for closed models or accept meaningfully lower quality. Now there is a genuine third path for many tasks. That is the disruption worth paying attention to.

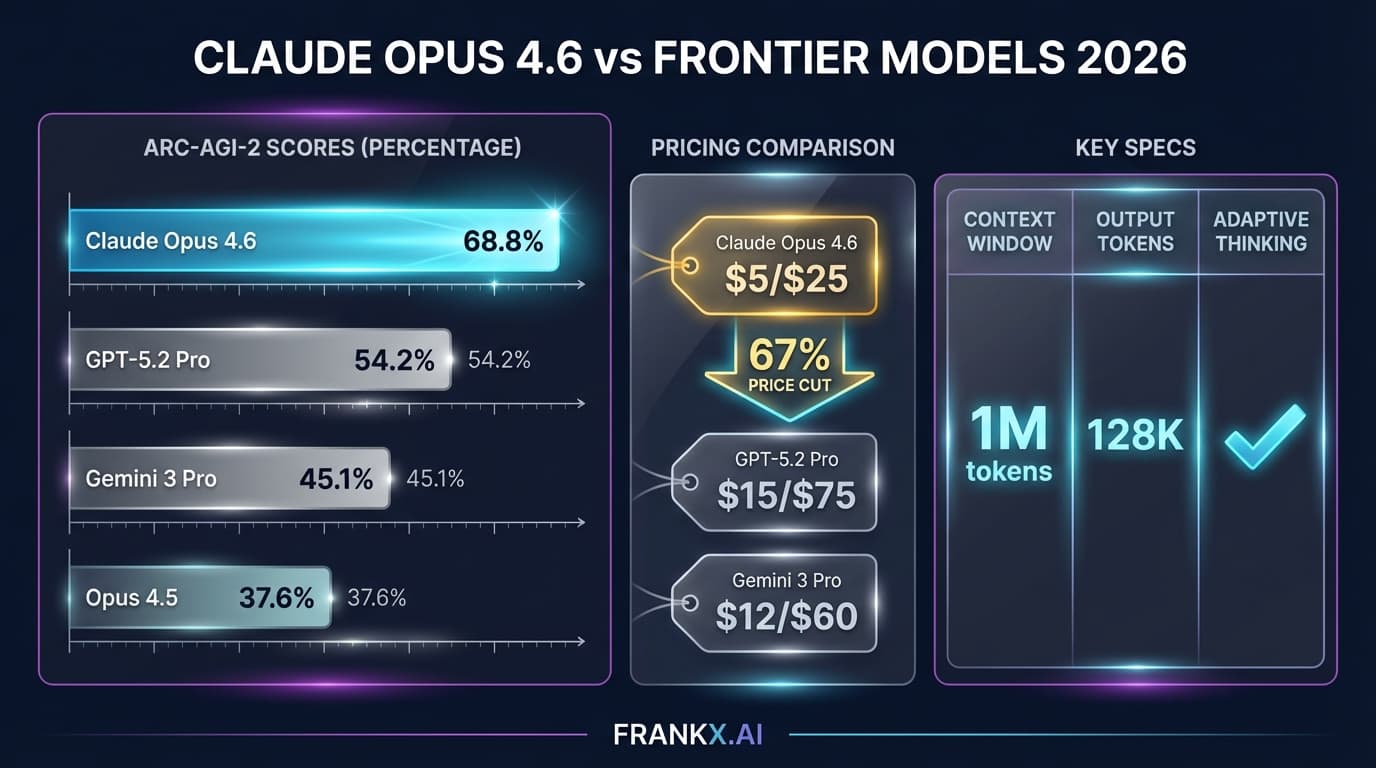

See how I think about the full frontier model landscape in 2026 and the state of AI research in my research hub. For a deeper look at how Claude specifically fits into production workflows, read my Claude Opus 4.6 analysis.

What Came After R1

R1 was the opening move. DeepSeek kept shipping.

DeepSeek V3.1 (August 2025) brought meaningful improvements to reasoning quality across the board. The MoE routing became more efficient, and the model showed stronger performance on multi-step problem decomposition — closing some of the gap with closed models on structured reasoning tasks while maintaining the cost advantage that made V3 compelling in the first place.

DeepSeek V3.2 (December 2025) was the more significant release. The headline: V3.2 integrates thinking directly into tool-use workflows, addressing what was arguably R1's most significant practical weakness. Earlier in this article I noted that tool-use consistency in agentic workflows lagged closed models — V3.2 was DeepSeek's direct response. The model now reasons through tool selection, argument construction, and error recovery as part of its chain-of-thought process rather than treating tool calls as a separate capability bolted on top. In benchmarks, V3.2 performs comparably to GPT-5 on agentic task completion, which is a substantial leap from where R1 stood.

DeepSeek V3.2-Speciale is a high-compute variant of V3.2 tuned for maximum reasoning depth rather than throughput. It spends more tokens per problem and, at release, was offered without tool-calling support. Think of it as the model for the hardest math and coding problems, not a drop-in replacement for V3.2 in high-volume or agentic pipelines.

The trajectory here is worth noting. In under a year, DeepSeek went from "impressive reasoning but unreliable tool use" to "competitive with the best closed models on agentic tasks." The open-weight ecosystem did not stand still while the closed labs iterated. For builders who wrote off DeepSeek for agentic work based on R1-era limitations, the V3.2 generation warrants a fresh evaluation.

Frequently Asked Questions

Can I run DeepSeek V3 on consumer hardware?

The full 671B V3 model requires significant infrastructure: multiple high-end GPUs with substantial VRAM. The distilled variants are more accessible. As a rough guide, the 8B distill runs on an RTX 3090 or 4090 (24GB VRAM), the 14B on 2x 24GB GPUs at 16-bit precision, and the 32B on 2-4x 24GB GPUs depending on precision. Quantized builds need less. For most individual builders, the distills are the practical path to local inference.
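You can sanity-check hardware figures like these with a back-of-the-envelope estimate: weight memory is parameter count times bytes per parameter, plus headroom. The 20% overhead for KV cache and activations in this sketch is an assumption, and real usage varies with context length and serving framework.

```python
import math

def weight_memory_gb(params_billions, bits_per_param):
    """Memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_param / 8

def gpus_needed(params_billions, bits_per_param, gpu_gb=24, overhead=1.2):
    """Rough GPU count; overhead is an assumed 20% allowance on top of weights."""
    needed = weight_memory_gb(params_billions, bits_per_param) * overhead
    return math.ceil(needed / gpu_gb)

for size in (8, 14, 32):
    print(f"{size}B: {gpus_needed(size, 16)} GPU(s) at 16-bit, "
          f"{gpus_needed(size, 4)} at 4-bit")
```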

How does DeepSeek R1 handle context length?

DeepSeek V3 supports up to 128K token context. The reasoning traces that R1 generates can be verbose, which means complex reasoning tasks consume tokens faster than a direct-answer model would. In practice, for multi-document analysis and long-context tasks, this is generally fine — but budget for the reasoning tokens in your cost calculations.
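A quick way to budget for this is to count reasoning tokens as generated output, both against the context window and in cost. The sketch assumes reasoning tokens are billed like answer tokens and uses a made-up output price of $2.00 per million, so substitute your provider's actual rate.

```python
CONTEXT_LIMIT = 128_000  # tokens, from the figure above

def fits_in_context(prompt_tokens, reasoning_tokens, answer_tokens,
                    limit=CONTEXT_LIMIT):
    """Prompt, reasoning trace, and answer all share one context window."""
    return prompt_tokens + reasoning_tokens + answer_tokens <= limit

def output_cost(reasoning_tokens, answer_tokens, price_per_million_output):
    # Assumption: reasoning tokens are billed like any other generated token.
    total = reasoning_tokens + answer_tokens
    return total * price_per_million_output / 1_000_000

PRICE = 2.00  # hypothetical $/M output tokens

with_trace = output_cost(8_000, 500, PRICE)
direct = output_cost(0, 500, PRICE)
print(f"with reasoning: ${with_trace:.4f}, direct answer: ${direct:.4f}")
print(fits_in_context(120_000, 8_000, 500))
```

In this example the trace makes the same 500-token answer 17 times more expensive to generate, and a prompt that fits comfortably for a direct-answer model overflows the window.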

Is DeepSeek safe to use for sensitive business data?

This depends entirely on your deployment model. Using DeepSeek's hosted API means your data passes through their servers, which are operated by a Chinese company and subject to Chinese law. For many businesses with data residency or sovereignty requirements, this is a disqualifier. The answer for those cases is self-hosted deployment using the open weights on your own infrastructure. Open weights make this possible in a way that closed models do not.

How does DeepSeek's training compare to what we know about GPT-4 and Claude training?

DeepSeek used reinforcement learning from verifiable rewards (RLVR): training R1 on tasks where correctness can be automatically verified (math problems with known answers, code that compiles and passes tests). This is related to but distinct from RLHF, where the reward comes from human preference judgments. The approach is increasingly common for reasoning-focused models because it allows scaling reward signal without massive human labeling effort. Anthropic and OpenAI are understood to use related techniques in their reasoning models (o1, Claude's extended thinking). The key DeepSeek contribution is showing this can be done at frontier quality for a fraction of the compute budget.
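What makes a reward "verifiable" is that a program can compute it with no human in the loop. The two toy reward functions below illustrate the idea. The `Answer:` output format, the `solve` function name, and the scoring rules are assumptions for the example, not DeepSeek's actual reward design.

```python
def math_reward(completion, expected):
    """1.0 if the last 'Answer:' line matches the known result, else 0.0."""
    for line in reversed(completion.strip().splitlines()):
        if line.startswith("Answer:"):
            return 1.0 if line[len("Answer:"):].strip() == expected else 0.0
    return 0.0  # no answer line found

def code_reward(source, test_cases, func_name="solve"):
    """Fraction of test cases passed by a generated function."""
    namespace = {}
    try:
        exec(source, namespace)  # only run untrusted code inside a sandbox
        func = namespace[func_name]
    except Exception:
        return 0.0  # code that fails to load earns nothing
    passed = 0
    for args, expected in test_cases:
        try:
            if func(*args) == expected:
                passed += 1
        except Exception:
            pass  # a crash on one case counts as a failure for that case
    return passed / len(test_cases)

print(math_reward("17 * 3 = 51\n51 + 4 = 55\nAnswer: 55", "55"))
print(code_reward("def solve(a, b):\n    return a + b",
                  [((1, 2), 3), ((2, 2), 4)]))
```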

When should I choose DeepSeek over Claude for production?

Choose DeepSeek when: (1) you need self-hosted deployment for data privacy, (2) the task is reasoning-heavy and cost at scale matters, (3) you want to fine-tune on domain-specific reasoning patterns, (4) you are running research pipelines where auditable reasoning traces are useful. Stay with Claude when: (1) the task requires reliable multi-step tool use, (2) you need managed API reliability and SLA, (3) instruction adherence across complex multi-constraint prompts is critical, (4) safety properties need to be auditable for a consumer-facing product.

DeepSeek did not replace closed models. It made the choice between open and closed a genuine engineering decision rather than a quality default. That shift in the calculation is what matters.

Get Started

Build your first AI system

Step-by-step guide to setting up ACOS, creating your first agent, and shipping real products with AI.

Start buildingTemplates & Blueprints

Production-ready architecture

Download AI architecture templates, multi-agent blueprints, and prompt engineering patterns.

Browse templatesInner Circle

Join the builder community

Connect with creators and architects shipping AI products. Weekly office hours, shared resources, direct access.

Join the circleRead on FrankX.AI — AI Architecture, Music & Creator Intelligence

Stay in the intelligence loop

Weekly field notes on AI systems, production patterns, and builder strategy.

Continue Reading

Intelligence Dispatches13 min read

Claude, GPT, Gemini, DeepSeek: Which Model for Which Task?

Every major frontier model compared — architecture, capabilities, pricing, and which to use for coding, research, creative work, and enterprise deployment.

Read article

Intelligence Dispatches13 min read

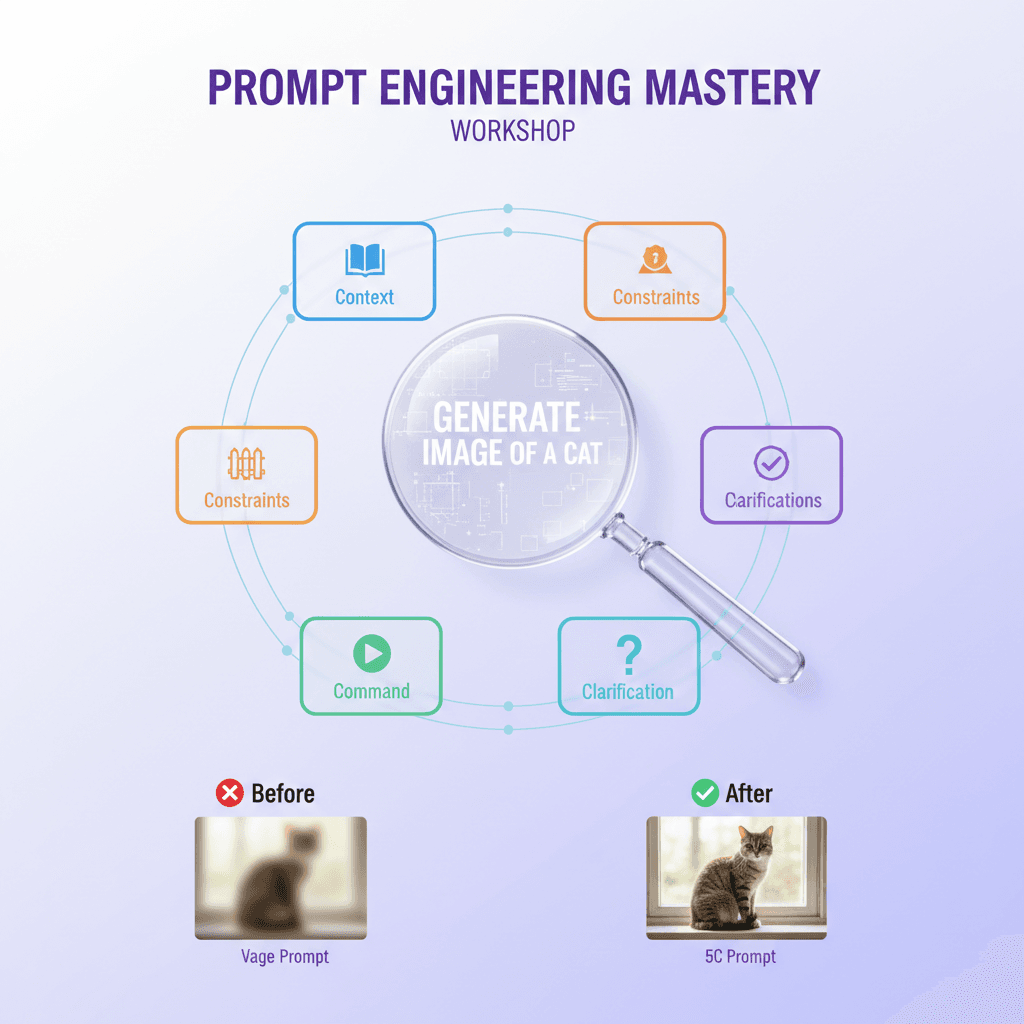

Prompt Engineering in 2026: What Still Works

The prompting techniques that survived the model upgrades — structured output, chain-of-thought, few-shot, and what to stop doing.

Read article